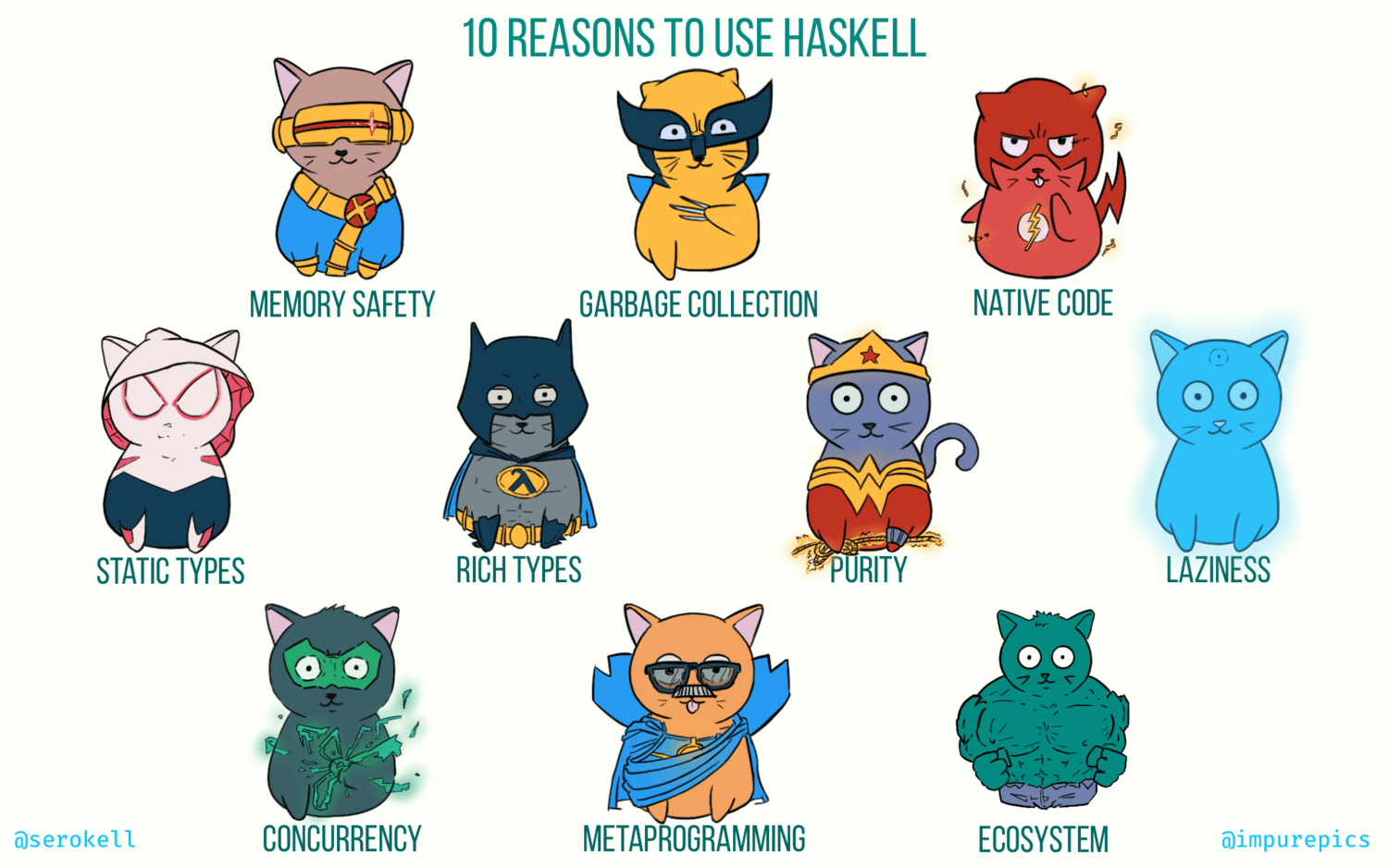

Haskell is a blend of cutting-edge research and well-tested, time-proven technology. It occupies a unique position between academia and industry. Some of its features, such as garbage collection and native code generation, can be found in mainstream languages. Other features, such as purity and lazy evaluation, are shared only by less popular, niche languages. In no particular order, here are 10 notable traits of this programming language that we as Haskell developers appreciate.

- Memory safety. Manual memory management in C and C++ often leads to buffer overflows, use-after-free, memory leaks, and other memory-related bugs. This results in security vulnerabilities (see “Observed Examples” for CWE-416: Use After Free). Software written in Haskell is unlikely to exhibit such issues thanks to automatic memory management. Memory safety is a common trait among modern languages, including Java, Python, Go, JavaScript, Rust, and others, and it is absolutely essential for writing secure software.

- Garbage collection. There are two ways to achieve memory safety: garbage collection (more common) and static lifetime checking (the Rust way). While garbage collection makes Haskell less suited for real-time systems, such as computer games, it is less limiting than lifetime checking, thus facilitating better abstractions and higher developer productivity.

- Native code. Unlike Python, Ruby, JavaScript, Lua, and other interpreted languages, Haskell is compiled ahead-of-time, directly to native machine code. The compiler (GHC) is remarkably good at optimization and generating efficient executables. This makes Haskell a great choice for applications that require good performance, such as high-throughput data processing.

- Static types. Like Java and unlike JavaScript, Haskell has a type-checker that validates the code during development. This means that many bugs are caught early in the development cycle before the product reaches the users or even the quality assurance department. Furthermore, the developer can study the data model encoded in types to better understand the business domain.

- Rich types. Unlike Java or Go, where static types often come off as a nuisance, the type system of Haskell is powerful enough to become a convenience. With support for algebraic data types, parametric polymorphism, class-based (ad-hoc) polymorphism, type families, type equalities, existential quantification, higher-rank polymorphism, kind polymorphism, and runtime type inspection, Haskell offers an extremely versatile toolset for writing statically typed programs.

- Purity. Haskell’s design is centered around pure functions and immutable data. Over and over, these features have proven essential for writing correct software. Managing global state, mutable data, and side effects is error-prone, and Haskell gives the programmer all the tools to avoid or minimize these sources of complexity.

- Laziness. From the very start, Haskell was conceived as a lazy language, and to this day lazy evaluation remains its landmark feature. The idea is to defer computation until the results are needed, and the consequences are ease of refactoring, the ability to define custom control structures, and improved composability.

- Concurrency. In many languages, concurrency is a never-ending source of issues, but in Haskell it is fairly straightforward. Green threads, amazing libraries such as async and stm, and the ubiquity of pure functions make writing concurrent applications in Haskell a pleasure instead of a headache.

- Metaprogramming. Haskell supports the inspection and generation of the program’s abstract syntax tree. This feature is called Template Haskell, and it’s used for compile-time evaluation and to automate boilerplate generation.

- Ecosystem. Hackage is a centralized repository of open-source Haskell software, featuring over 14000 packages. Stackage is a curated collection of package versions that guarantees compatibility between libraries, featuring over 2000 well-maintained packages. It is not uncommon to find out that the problem you’re solving has already been solved and shipped as an open-source library.

Examples

It’s always hard to illustrate these advantages because they mostly manifest themselves in day-to-day coding when working on large-scale projects. Short, synthetic snippets of code that can fit in an article cannot represent the true value of these language features.

Nevertheless, even a synthetic example is better than none at all, so let’s see how code in Haskell compares to code in other programming languages.

Memory Safety

Modern C++ with heavy use of smart pointers, STL containers, and RAII in general, is less susceptible to memory issues. However, it is still quite easy to mishandle memory using just a few lines of code:

    #include <iostream>

    void f(int* x);

    int main() {
      int *x = new int(42);
      f(x);
      delete x;
      return 0;
    }

    void f(int* x) {
      std::cout << *x << std::endl;
      delete x;
    }

g++ -Wall compiles this code without warnings. The extraneous delete causes a crash. But a crash is a benign manifestation of a memory issue. It’s way worse when it corrupts users’ data or opens up an attack surface. In this trivial example valgrind can detect the issue. Alas, it cannot guarantee that every codepath in a large program is safe.

In Haskell, allocation and deallocation of memory are handled by the runtime system, so there’s less room for error. The Foreign.Marshal.Alloc module allows one to manage memory manually, but its use is extremely uncommon (under 4% of packages published on Hackage). Therefore, most Haskell programs are immune to this class of bugs.
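As a rough counterpart to the C++ program above, here is a minimal sketch that stores the value in a heap-allocated mutable cell (an IORef from base). The helper name readCell is made up for illustration. There is no operation for freeing the cell, so a double free like the one above cannot be written.

```haskell
import Data.IORef (IORef, newIORef, readIORef)

-- Hypothetical counterpart of the C++ function f: it can read the cell,
-- but it has no way to free it.
readCell :: IORef Int -> IO Int
readCell = readIORef

main :: IO ()
main = do
  cell <- newIORef 42       -- allocated on the heap, owned by the runtime
  first <- readCell cell
  second <- readCell cell   -- still valid: nothing was freed in between
  print (first, second)
```

The cell is reclaimed by the garbage collector once nothing refers to it anymore.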

Native Code

Python is a high-level interpreted language that emphasizes code readability. For example, here’s a function that implements 1-dimensional Gaussian smoothing:

    def smooth(signal, kernel):
        smoothed = [0] * len(signal)
        half_width = (len(kernel) - 1) // 2
        for i in range(len(signal)):
            left = max(0, i - half_width)
            right = min(i + half_width, len(signal) - 1)
            smoothed[i] = sum(
                signal[j] * kernel[half_width - (i - j)]
                for j in range(left, right + 1)
            )
        return smoothed

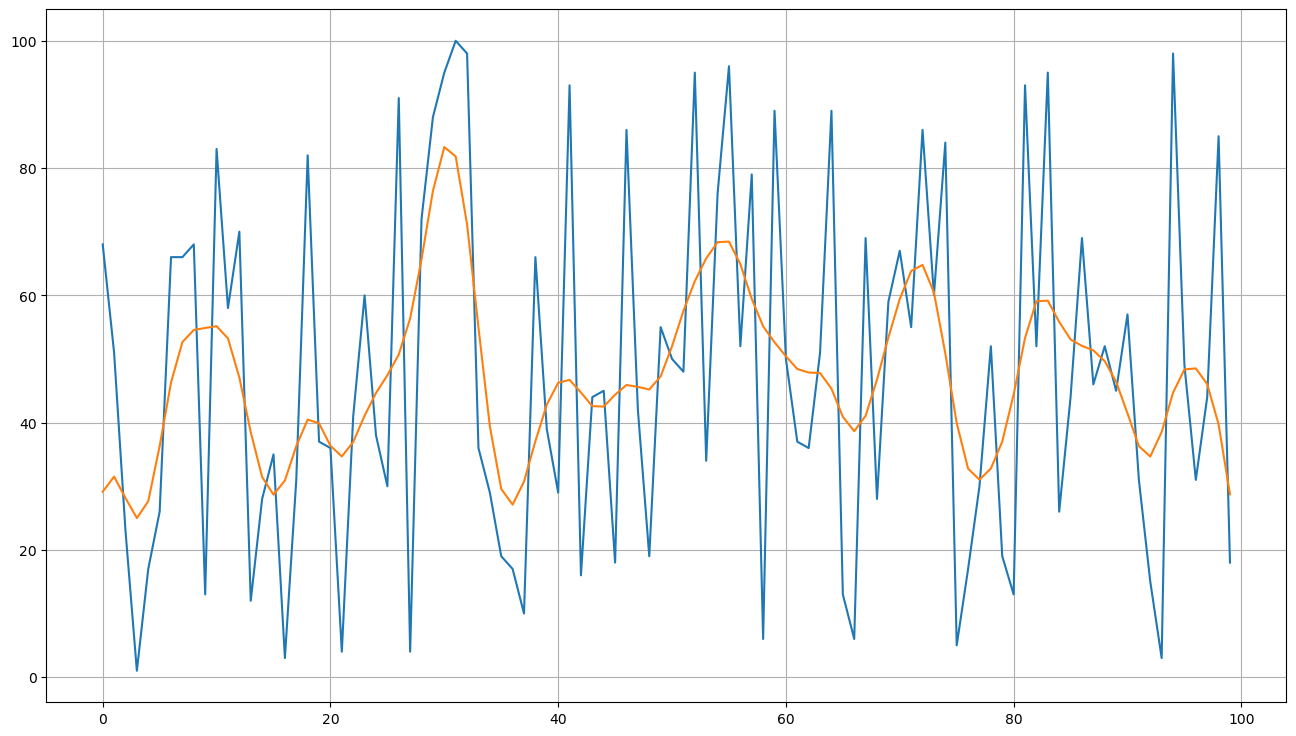

This is achieved with a convolution: we move our kernel along the signal and compute sliding dot products. For each point of the input signal, we try to overlap the kernel and cut off the edges (defaulting the signal to 0 when looking outside the range).

If we measure it with IPython’s default benchmarking tool, %timeit, we get these figures for a signal of length 5000 and a kernel of length 13:

14.6 ms ± 116 µs per loop (mean ± std. dev. of 7 runs, 100 loops each)

Is this fast or slow? Let’s compare to np.convolve from NumPy which is implemented in C:

84.5 µs ± 371 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each)

That’s microseconds instead of milliseconds, an improvement of more than two orders of magnitude (roughly 170 times faster). This means that Python by itself is a poor fit for performance-sensitive code, unless most of the work is offloaded to C functions.

Now let’s see how Haskell fares against Python and C. Oftentimes, Haskell developers use linked lists to represent sequences of values, even though it may not be the best choice of data structure for the task at hand:

    import Data.List (tails)

    smooth :: [Double] -> [Double] -> [Double]
    smooth signal kernel =
      take signal_length $
        map (\t -> take kernel_width t `dot` kernel) $
          tails padded
      where
        signal_length = length signal
        kernel_width = length kernel
        half_width = (kernel_width - 1) `div` 2
        pad = replicate half_width 0
        padded = pad <> signal

    dot :: [Double] -> [Double] -> Double
    dot a b = sum $ zipWith (*) a b

To measure the performance, we will compile with optimizations (-O2) and use criterion, Haskell’s de-facto standard tool for benchmarking:

time 310.1 μs (309.3 μs .. 310.7 μs)

1.000 R² (1.000 R² .. 1.000 R²)

mean 310.2 μs (309.6 μs .. 311.0 μs)

std dev 2.282 μs (1.749 μs .. 3.279 μs)

So, a naive and very simple Haskell implementation outperforms the naive Python code by a factor of almost 50!

What would it take to get closer to the C implementation? Turns out, not much — we just have to use the right data structure: arrays with slicing provided by the vector package.

    smoothVslice :: V.Vector Double -> V.Vector Double -> V.Vector Double
    smoothVslice signal kernel = V.generate signal_length calc
      where
        signal_length = V.length signal
        kernel_width = V.length kernel
        half_width = (kernel_width - 1) `div` 2

        calc :: Int -> Double
        calc i = V.sum $ V.zipWith (*) signal_part kernel_part
          where
            left = max 0 (i - half_width)
            right = min (i + half_width) (signal_length - 1)
            signal_part = V.slice left (right - left + 1) signal
            kernel_part = V.slice (half_width - (i - left)) (right - left + 1) kernel

Now we are about 140 times faster than Python, and only about 27% slower than the highly optimized NumPy implementation:

time 107.0 μs (106.8 μs .. 107.2 μs)

1.000 R² (1.000 R² .. 1.000 R²)

mean 106.6 μs (106.4 μs .. 106.8 μs)

std dev 617.6 ns (511.7 ns .. 779.0 ns)

Compared to interpreted languages, Haskell makes it surprisingly easy to get decent performance.

A more detailed exploration of this example is available in an article by Maxim Koltsov.

Static Types

There’s much debate as to whether static types are better than dynamic types, or vice versa. If you are in the dynamic typing camp, perhaps this article by Alexis King might convince you otherwise. And if you prefer static types, you’ll feel right at home writing Haskell.

In any case, the primary and unquestionable advantage of static typing is that it catches some of the bugs before the software is shipped to the users. Testing can only discover the presence of certain unwanted behaviors, whereas types can guarantee their absence.

In JavaScript, even a blatantly incorrect program may have silent bugs:

let v = 0;

if (p) { v.x = "hello"; }

Since v is a number, it does not have any fields, and the assignment has no effect. The two lines may be far apart, so the issue can be hard to spot. And the condition p may be hard to trigger, so the tests may not catch this.

You could try to fight this by achieving 100% test coverage, but even then some issues may go unnoticed, as running code on fixed inputs does not guarantee its correctness on arbitrary inputs.

Alternatively, you could use TypeScript, which helpfully identifies the error before the browser even gets to run this code:

Property 'x' does not exist on type 'number'.

In this regard, Haskell is like TypeScript. The compiler will analyze the code and identify as many issues as it can.

Another advantage of static types is type inference. The compiler can tell you essential information needed to use an API. Let’s say we have the following function:

traverse_ f = foldr ((*>) . f) (pure ())

It’s concise but a bit cryptic. How do you know what it takes as input and what it produces as output? GHCi will report this information:

> :t traverse_

traverse_ :: (Foldable t, Applicative f) => (a -> f b) -> t a -> f ()

Specialise it to concrete types, such as [] and IO, and you get a pretty decent description of how to use it:

traverse_ :: (a -> IO b) -> [a] -> IO ()

Even on an occasion when a Haskell library is poorly documented, it’s often possible to chase the types to figure out how to use it.
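To make the specialised signature concrete, here is a small sketch that uses the library version of traverse_ (from Data.Foldable in base) at two different choices of f. The helper positive is a made-up example function, not part of any library.

```haskell
import Data.Foldable (traverse_)

-- Hypothetical helper: accept only positive numbers.
positive :: Int -> Maybe Int
positive n = if n > 0 then Just n else Nothing

main :: IO ()
main = do
  -- t is [] and f is IO: run an action for every element.
  traverse_ print [1, 2, 3 :: Int]
  -- t is [] and f is Maybe: succeed only if every element passes the check.
  print (traverse_ positive [1, 2, 3])
  print (traverse_ positive [1, -2, 3])
```

The last two lines print Just () and Nothing: the same function, read through its type, doubles as a validator.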

Purity

Pure functions are a joy to test and debug. They are deterministic: for equal inputs, they produce equal outputs. And their correctness is composable: if you verified that pure functions f and g produce correct results, then you don’t have to worry that using them together might affect their behavior due to shared mutable state.

This empowers us to reason about parts of the system in isolation. And it allows us to use property-based testing, one of the most powerful testing techniques. The idea is to apply pure functions to random inputs and verify their results.

Say we are implementing a sorting algorithm:

sort :: [Integer] -> [Integer]

No matter how complex the implementation, we can devise a few simple tests to check it:

- No elements are lost: every element of the input must also occur in the output.

- No elements are introduced: every element of the output must also occur in the input.

- The length of the output must be equal to the length of the input.

- The output is sorted: every element is less than or equal to the next one.

With these properties in mind, we define the following test:

    prop_sort_valid :: [Integer] -> Bool
    prop_sort_valid xs = no_lost && no_introduced && eq_len && is_sorted
      where
        sorted = sort xs
        no_lost = all (\x -> elem x sorted) xs
        no_introduced = all (\y -> elem y xs) sorted
        eq_len = length xs == length sorted
        is_sorted = isSorted sorted

    isSorted [] = True
    isSorted [x] = True
    isSorted (x : y : xs) = x <= y && isSorted (y : xs)

Using the QuickCheck library, we can generate random inputs to check this property:

main = quickCheck prop_sort_valid

Running this test will produce a report:

+++ OK, passed 100 tests.

Due to the randomized nature of property-based tests, they tend to reveal unexpected corner cases. And while it’s true that one can write pure functions in any language, Haskell encourages it, and the culture around it is built around purity.
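To see the properties catch an actual bug, here is a self-contained sketch that does not depend on QuickCheck. It assumes a handful of fixed inputs in place of random generation, and the names goodSort, badSort, propSortValid, and samples are invented for this illustration.

```haskell
import Data.List (insert)

-- A correct reference implementation (insertion sort).
goodSort :: [Integer] -> [Integer]
goodSort = foldr insert []

-- A deliberately broken variant that silently drops duplicates.
badSort :: [Integer] -> [Integer]
badSort = foldr insertUnique []
  where
    insertUnique x ys
      | x `elem` ys = ys
      | otherwise = insert x ys

isSorted :: [Integer] -> Bool
isSorted xs = and (zipWith (<=) xs (drop 1 xs))

-- The four properties from the text, parameterised by the sort under test.
propSortValid :: ([Integer] -> [Integer]) -> [Integer] -> Bool
propSortValid sortFn xs = noLost && noIntroduced && eqLen && isSorted sorted
  where
    sorted = sortFn xs
    noLost = all (`elem` sorted) xs
    noIntroduced = all (`elem` xs) sorted
    eqLen = length xs == length sorted

-- Fixed inputs standing in for QuickCheck's random generation.
samples :: [[Integer]]
samples = [[], [1], [3, 1, 2], [2, 2, 1], [5, 4, 4, 0]]

main :: IO ()
main = do
  print (all (propSortValid goodSort) samples)  -- every property holds
  print (all (propSortValid badSort) samples)   -- the length property fails
```

Note that these four properties are not a complete specification: an output of [1, 2, 2] for the input [1, 1, 2] would satisfy all of them. Comparing element counts would close that gap.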

Laziness

In Haskell, it is always valid to factor out subexpressions as a form of refactoring. Consider this program:

    import Numeric.Natural (Natural)

    main = do
      n <- readLn
      if even n
        then print (countDigits (product [1..n]))
        else return ()

    countDigits :: Natural -> Int
    countDigits n = if n < 10 then 1 else 1 + countDigits (n `quot` 10)

We get a number from the user, and if it’s even, perform an expensive computation; if it’s odd, do nothing. Skipping the computation can save us a lot of time:

$ echo 100000 | time ./compute

456574

./compute 19.79s user 0.11s system 99% cpu 19.901 total

$ echo 100001 | time ./compute

./compute 0.00s user 0.00s system 90% cpu 0.001 total

Of course, this is only possible because if then else is computed lazily: the then branch is evaluated only when the condition holds, and the else branch is only evaluated when the condition does not hold. Even in eager (strict) languages, there are often built-in lazy operators available, such as && and || in C++. This is also known as short-circuit evaluation.

The remarkable property of Haskell is that user-defined functions also exhibit this behavior. Let’s factor out our check into a custom function, whenEven:

    main = do
      n <- readLn
      whenEven n (print (countDigits (product [1..n])))

    whenEven n action = if even n then action else return ()

In an eager language, this simple refactor would lead to a major performance degradation. The expensive computation would be always performed before the whenEven call, even though its result is not needed half the time.

There are eager languages that claim to support functional programming, including OCaml, PureScript, Scala, Idris, and so on. But what sort of functional language doesn’t let you decompose your code into functions without losing performance?

In Haskell, we can focus on readability when structuring the code. We can even move the expensive computation entirely outside the whenEven call:

    main = do
      n <- readLn
      let p = countDigits (product [1..n])
      whenEven n (print p)
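The same idea can be shown in its smallest form. The sketch below defines a conditional as an ordinary function (myIf is a made-up name) and passes it an argument that would crash if it were ever evaluated.

```haskell
-- Hypothetical user-defined conditional: an ordinary function, not syntax.
myIf :: Bool -> a -> a -> a
myIf cond thenBranch elseBranch = if cond then thenBranch else elseBranch

main :: IO ()
main = do
  -- The branch that is not selected is never evaluated, so the error
  -- calls below are harmless.
  print (myIf True (1 :: Int) (error "never evaluated"))
  print (myIf False (error "never evaluated") (2 :: Int))
```

In an eager language, both arguments would be evaluated before the call, and the program would fail on the first line of main.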

Furthermore, laziness is basically a prerequisite for writing modular, reusable code. Consider these functions in Haskell:

map f [] = []

map f (x : xs) = f x : map f xs

and [] = True

and (x : xs) = x && and xs

all f = and . map f

map applies a function to every list element. and checks if every element is True. Their composition yields all, a function that checks that every element satisfies a certain condition (predicate).

Notably, due to the short-circuiting properties of &&, the and function will stop as soon as it encounters the first False value. And thanks to lazy evaluation, this property carries over to all:

main = print (all expensive_check [x1, x2, x3, ..., x10000])

Say expensive_check x3 yields False, then checking thousands of other elements will be avoided. To preserve these computational properties in an eager language, we would have to write all from scratch:

all f [] = True

all f (x : xs) = f x && all f xs

This means that the most basic notions of functional programming, such as function composition or folding over a list, require lazy evaluation to work properly.
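A quick way to observe this is to run the composed definition on inputs that could never be fully traversed. In this sketch the function is renamed to allViaMap (a made-up name) to avoid clashing with the Prelude.

```haskell
-- The composition from the text, under a name that does not clash
-- with Prelude's all.
allViaMap :: (a -> Bool) -> [a] -> Bool
allViaMap f = and . map f

main :: IO ()
main = do
  -- An infinite list: evaluation stops at the first element that is not < 5.
  print (allViaMap (< 5) [1 :: Integer ..])
  -- The last element is never inspected, because 7 already fails the check.
  print (allViaMap even [2, 4, 7, error "never inspected" :: Integer])
```

Both calls return False without touching the rest of the list.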

A more detailed exploration of this topic can be found in an excellent article by Lennart Augustsson.

Concurrency

In Haskell, the runtime system implements various primitives for concurrent programming, such as MVar. But often you will find yourself using high-level combinators, such as mapConcurrently from the async package:

mapConcurrently :: (a -> IO b) -> [a] -> IO [b]

-- specialised to lists

This function will process each list element in its own thread:

    main =
      mapConcurrently putStrLn
        [ "hello",
          "this",
          "is",
          "concurrent" ]

Running this program will produce various lovely outputs, such as this:

hellticohso

i

nsc

urrent

As you can imagine, instead of printing to stdout we could make HTTP requests or perform any other actions.
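For intuition about how such a combinator can be built from the runtime primitives, here is a simplified sketch that uses only forkIO and MVar from base. The name mapConcurrentlySketch is invented, and this is not how the async package implements mapConcurrently: in particular, it ignores exceptions and cancellation, which async handles.

```haskell
import Control.Concurrent (forkIO)
import Control.Concurrent.MVar (newEmptyMVar, putMVar, takeMVar)

-- Hypothetical, simplified stand-in for mapConcurrently.
-- Assumption: the action never throws; otherwise the result is never
-- written and the caller would wait on an MVar that stays empty.
mapConcurrentlySketch :: (a -> IO b) -> [a] -> IO [b]
mapConcurrentlySketch f xs = do
  vars <- mapM spawn xs   -- start one green thread per element
  mapM takeMVar vars      -- wait for the results, in input order
  where
    spawn x = do
      var <- newEmptyMVar
      _ <- forkIO (f x >>= putMVar var)
      pure var

main :: IO ()
main = do
  lengths <- mapConcurrentlySketch (pure . length) ["hello", "this", "is", "concurrent"]
  print lengths
```

The threads may finish in any order, but the results are collected in the order of the input list, so this prints [5,4,2,10].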

For a more detailed overview of async and stm, check out the article by Michael Snoyman.

Metaprogramming

Haskell has a built-in mechanism for code generation, deriving:

    data Color = Black | Red | White
      deriving Eq

This will generate the following instance:

    instance Eq Color where
      Black == Black = True
      Red == Red = True
      White == White = True
      _ == _ = False

This is a powerful mechanism that can derive instances of Show, Eq, Ord, Functor, Foldable, Traversable, and other classes. However, it is not always sufficient.
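As a short illustration, the sketch below derives several standard classes at once for the same Color type and uses the generated instances. All of these classes are derivable in standard Haskell without extensions.

```haskell
data Color = Black | Red | White
  deriving (Eq, Ord, Show, Enum, Bounded)

main :: IO ()
main = do
  print (Red == Red)                     -- Eq
  print (Black < White)                  -- Ord follows constructor order
  print [minBound .. maxBound :: Color]  -- Enum, Bounded, and Show together
```

The last line prints [Black,Red,White], with no instance written by hand.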

A simple example of something GHC is unable to generate is JSON encoders and decoders. However, there is a library called aeson which, with the help of Template Haskell, can automatically derive ToJSON and FromJSON instances:

data NumberRange = NumberRange { range_from :: Integer, range_to :: Integer }

deriveJSON (JSON.defaultOptions { JSON.fieldLabelModifier = drop 6 }) ''NumberRange

The second line generates the following instances, thereby saving us from having to write them by hand:

    instance ToJSON NumberRange where
      toJSON (NumberRange arg1 arg2) =
        fromPairs (pair "from" (toJSON arg1) <> pair "to" (toJSON arg2))
      toEncoding (NumberRange arg1 arg2) =
        fromPairs (pair "from" (toEncoding arg1) <> pair "to" (toEncoding arg2))

    instance FromJSON NumberRange where
      parseJSON (Object recObj) =
        NumberRange
          <$> (lookupField parseJSON "Main.NumberRange" "NumberRange" recObj)
                (Data.Text.pack "from")
          <*> (lookupField parseJSON "Main.NumberRange" "NumberRange" recObj)
                (Data.Text.pack "to")
      parseJSON other =
        parseTypeMismatch' "NumberRange" "Main.NumberRange" "Object"
          (valueConName other)

deriveJSON is defined in Haskell, not in any special meta-language. This means we can write Haskell code that generates other Haskell code.

For a more involved example, a common way to manipulate data in Haskell is by using optics. Optics come in many forms: getters, setters, folds, traversals, lenses, prisms, isomorphisms, and so on. Unfortunately, the compiler does not generate optics for data types:

data Person = MkPerson { _name :: String, _uuid :: UUID }

name :: Functor f => (String -> f String) -> Person -> f Person

name f s = (\b -> s { _name = b }) <$> f (_name s)

uuid :: Functor f => (UUID -> f UUID) -> Person -> f Person

uuid f s = (\b -> s { _uuid = b }) <$> f (_uuid s)

Fortunately, instead of writing the name and uuid lenses by hand, we can use a Template Haskell function from the lens package:

data Person = MkPerson { _name :: String, _uuid :: UUID }

makeLenses ''Person

For another example, consider singleton types, which are a way of modeling dependent types in Haskell. For any data type, we must define a corresponding singleton type:

    data Nat = Zero | Succ Nat

    data SNat (n :: Nat) where
      SZero :: SNat Zero
      SSucc :: SNat n -> SNat (Succ n)

To avoid this boilerplate, the singletons package provides a Template Haskell function that does this automatically:

data Nat = Zero | Succ Nat

genSingletons [''Nat]

Aside from code generation, this feature can be used for compile-time evaluation:

x = $(lift (sum [1..100]))

This is equivalent to defining x = 5050.
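For completeness, here is that splice as a whole module. This is a minimal sketch that assumes the template-haskell package that ships with GHC. The sum is computed while the module is being compiled, and only the resulting literal ends up in the program.

```haskell
{-# LANGUAGE TemplateHaskell #-}

import Language.Haskell.TH.Syntax (lift)

-- The splice runs at compile time; lift turns the computed value
-- into a literal expression.
x :: Integer
x = $(lift (sum [1 .. 100 :: Integer]))

main :: IO ()
main = print x
```

Running the program prints 5050.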

Ecosystem

Haskell has been around for quite some time and has accumulated a wealth of libraries. Here’s a microservice for generating random numbers, with a JSON API, remote monitoring, and command-line configuration:

    {-# LANGUAGE NamedFieldPuns, ApplicativeDo, TemplateHaskell,
                 DataKinds, TypeOperators, TypeApplications,
                 OverloadedStrings #-}

    import qualified Options.Applicative as CLI
    import Data.Aeson.TH (deriveJSON)
    import qualified Data.Aeson.TH as JSON
    import Control.Applicative
    import Servant
    import Network.Wai.Handler.Warp
    import qualified System.Remote.Monitoring as EKG
    import System.Random
    import Control.Monad.IO.Class

    data ConfigCLI =
      ConfigCLI { config_ekg_port :: Int, config_port :: Int }

    configCLI :: CLI.Parser ConfigCLI
    configCLI = do
      config_ekg_port <- CLI.option CLI.auto $
        CLI.long "ekg-port" <> CLI.metavar "NNNN"
      config_port <- CLI.option CLI.auto $
        CLI.long "port" <> CLI.metavar "NNNN"
      pure ConfigCLI{config_ekg_port, config_port}

    data NumberRange =
      NumberRange { range_from :: Integer, range_to :: Integer }

    deriveJSON (JSON.defaultOptions { JSON.fieldLabelModifier = drop 6 }) ''NumberRange

    type RandomGenAPI =
      "random" :> ReqBody '[JSON] NumberRange :> Post '[JSON] Integer

    randomGenAPI = Proxy @RandomGenAPI

    randomGenServer :: Server RandomGenAPI
    randomGenServer = \r -> liftIO $ randomRIO (range_from r, range_to r)

    main :: IO ()
    main = do
      ConfigCLI{config_ekg_port, config_port} <-
        CLI.execParser $
          CLI.info (configCLI <**> CLI.helper)
                   (CLI.fullDesc <> CLI.header "Random Numbers Microservice")
      EKG.forkServer "localhost" config_ekg_port
      run config_port (serve randomGenAPI randomGenServer)

In under 50 lines of code, a third of which is imports, we implemented a web service. Running it without parameters tells the user about its command-line configuration flags:

$ random-web-service

Missing: --ekg-port NNNN --port NNNN

Say we run it as random-web-service --ekg-port 8000 --port 8080. Now we can check its memory usage, allocation rate, productivity, and other metrics, by opening http://localhost:8000 in the browser.

And we can test its functionality by sending a few queries with curl:

$ curl --header "Content-Type: application/json" --request POST --data '{ "from": 4, "to": 10 }' http://localhost:8080/random

5

$ curl --header "Content-Type: application/json" --request POST --data '{ "from": 4, "to": 10 }' http://localhost:8080/random

9

This example is hardly impressive if you’re coming from a mainstream language. But it shows that Haskell has the libraries needed for writing actual applications. That’s an area where academic and research languages are often lacking.

For a detailed overview of the Haskell ecosystem, check out the article by Gabriel Gonzalez.

Conclusion

Haskell is the main technology that helps us deliver high quality software. There are various criteria to judge software quality, but the most important ones are correctness, performance, and maintainability. Haskell facilitates writing code that scores high on all of these accounts:

- Correctness. Strong static typing, purity, and immutable data are essential for writing code that adheres to specifications. Software written in Haskell tends to be secure, reliable, and bug-free.

- Performance. GHC (The Glasgow Haskell Compiler) generates optimized, native executables. Its runtime system supports green threads and ships with a multi-generational garbage collector. Haskell is a perfect choice for high-load concurrent applications, such as web backends.

- Maintainability. Haskell encourages using the type system to model the business domain and making the assumptions explicit. As a result, refactoring the code and adapting it to changing requirements is much easier.

Don’t hesitate to let us know what you think about Haskell’s advantages and disadvantages! Do you use it? Why, or why not?
