Generative artificial intelligence has transformed many industries, from content creation to healthcare and fintech. As its use has become widespread, it has also introduced cybersecurity challenges for individuals and entire corporations. The McKinsey Global Survey on AI shows that 40 percent of organizations plan to increase their overall AI investment because of advancements in generative AI. At the same time, 53 percent of organizations acknowledge cybersecurity as a generative AI-related risk.

In this post, we discuss why many see generative AI as a threat to cybersecurity, as well as how you can mitigate common security risks.

What makes generative AI special in terms of cybersecurity?

Generative AI (GenAI) is a technology that uses machine learning models (for example, large language models, diffusion models, and generative adversarial networks, or GANs) to generate new text, images, audio, and video. The use of generative AI can have both positive and negative implications for cybersecurity.

Negative aspects

Generative AI can be used for cyberattacks and fraud. Some of the common uses of GenAI by offenders are:

- Sophisticated malware. Generative AI can be used to produce new and sophisticated forms of malware. Adversarial actors could leverage these models to create malicious code that is harder for traditional cybersecurity tools to detect.

- Social engineering. AI-generated content can be used to create convincing phishing emails, websites, or messages. This makes it harder for users to distinguish between legitimate and malicious communications.

- Detection evasion. Generative models can be trained to produce examples specifically designed to evade detection by security systems. Such adversarial attacks exploit vulnerabilities in AI-based security mechanisms, leading to misclassifications or false negatives.

- Deepfakes. While not exclusive to cybersecurity, the generation of deepfakes using generative AI poses privacy risks. Deepfakes can be used to impersonate individuals, leading to social engineering attacks or the spread of false information. Generative models can be used to create synthetic images or videos that can fool biometric systems, such as facial recognition or fingerprint scanners.

Positive aspects

Cybersecurity professionals can leverage GenAI to protect their organizations from criminals:

- Red teaming exercises. Cybersecurity professionals can use generative AI to simulate realistic attack scenarios and test the effectiveness of their organization’s security infrastructure.

- Vulnerability detection. AI can also assist in identifying vulnerabilities in a system by automatically generating and testing potential attack vectors. This aids in strengthening defenses against known and potential threats.

- Anomaly detection. Generative models can be employed to learn normal patterns of network behavior. Deviations from these patterns can be flagged as potential security threats, enabling quicker detection of anomalous activities indicative of a security breach.
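To make the anomaly detection idea concrete, here is a minimal sketch. It is not a generative model: it stands in a simple statistical baseline (mean and standard deviation) for the learned "normal pattern", and the traffic numbers, function names, and the threshold of 3 standard deviations are all illustrative assumptions.

```python
from statistics import mean, stdev

def fit_baseline(samples):
    """Learn the 'normal' pattern as the mean and standard deviation of past traffic."""
    return mean(samples), stdev(samples)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value that lies more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # No variation was observed, so any different value is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical requests-per-minute counts observed during normal operation.
normal_traffic = [98, 102, 101, 97, 100, 103, 99, 100]
baseline = fit_baseline(normal_traffic)

for observed in (101, 450):
    label = "ANOMALY" if is_anomalous(observed, baseline) else "ok"
    print(observed, label)
```

A production system would learn a much richer model of behavior, but the principle is the same: fit on normal activity, then flag whatever deviates too far from it.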

It’s important to note that the same technology that can be exploited for malicious purposes can also be used to enhance cybersecurity defenses. Generative AI can be harnessed to create more robust intrusion detection systems, improve anomaly detection, and aid in the development of advanced security mechanisms. The ongoing challenge is to stay ahead of potential threats and continually adapt security measures to evolving AI-driven cyber threats.

Top GenAI cybersecurity threats for organizations

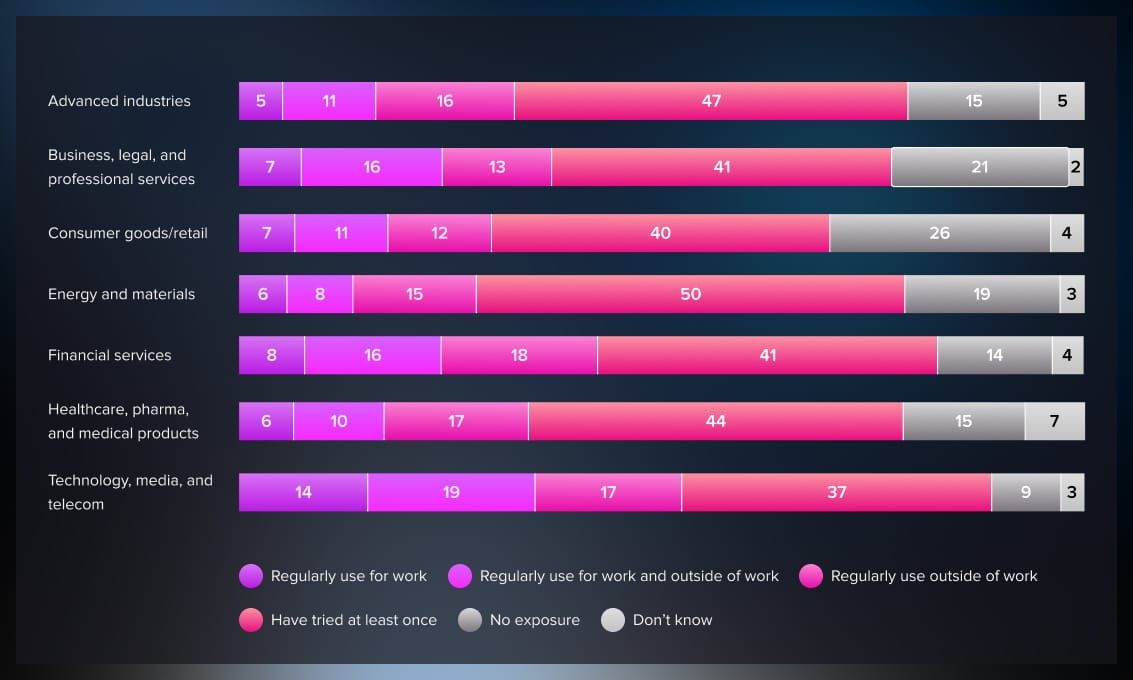

Here’s a visual overview of the most significant cybersecurity threats, according to a global survey by McKinsey:

Source: McKinsey

There are several issues with generative AI systems that make them dangerous for organizations.

Data loss

When employees at an organization use generative AI to perform day-to-day tasks, they often disclose personal and confidential information that is valuable to attackers. The same McKinsey survey reported that in 2023, one-third of respondents said their organizations were using GenAI regularly in at least one business function, and at least 22 percent said they were regularly using these tools in their own work.

One of these tools is, of course, ChatGPT. Because of its popularity, hackers often target ChatGPT to uncover sensitive data of companies and individuals, such as customer data, trade secrets, classified information, and intellectual property. Leaks can also start inside the company: a group of Samsung employees entered the source code of one of the company’s newer products into ChatGPT to see whether the chatbot could suggest a more efficient solution. As a result, Samsung banned the use of the tool in the workplace to prevent further data leakage.

IP leaks

An IP (Internet Protocol) leak refers to the unintended exposure or disclosure of a user’s real IP address, which can potentially compromise their privacy and security. The IP address is a unique identifier assigned to the user’s device when it connects to the internet. It can reveal information about their geographical location and, in some cases, identity.

When using generative AI or any online service, there are several ways through which an IP address can be leaked:

- API calls. If a generative AI model requires an internet connection and interacts with a server through API (Application Programming Interface) calls, the server may log the IP addresses of users making those requests. If the API is not properly secured, this information could be exposed.

- External dependencies. Generative AI models often rely on external services or libraries. If these external components are not configured correctly or if they log IP addresses, there’s a potential for IP leakage.

- Metadata in requests. When users submit data for processing by a generative AI model, the requests sent to the server may contain metadata that includes the user’s IP address. If this information is not handled securely, it could be leaked.

- Domain Name System (DNS). DNS translates human-readable domain names into IP addresses. Sometimes, DNS requests can bypass the VPN or proxy used to mask the user’s IP address, leading to a leak.

- Web Real-Time Communication (WebRTC). WebRTC is a technology that enables real-time communication in web browsers. If a browser has WebRTC vulnerabilities, it may inadvertently leak the user’s IP address, especially when using peer-to-peer connections.

- Malicious scripts. Malicious scripts on websites or within generative AI applications could be designed to extract and transmit the user’s IP address without their knowledge.

To prevent IP leakage, it’s important to use a VPN, disable WebRTC, and check configuration settings.
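On the server side, one way to limit what a leaked log can reveal is to truncate IP addresses before they are stored. The sketch below uses Python's standard `ipaddress` module; the function name and the prefix lengths (/24 for IPv4, /48 for IPv6) are assumptions chosen for illustration, and truncation reduces rather than eliminates identifiability.

```python
import ipaddress

def anonymize_ip(raw_ip):
    """Zero the host portion of an address so a log entry no longer pinpoints one device."""
    ip = ipaddress.ip_address(raw_ip)
    # Assumed prefix lengths: keep the first 24 bits of IPv4, the first 48 bits of IPv6.
    prefix = 24 if ip.version == 4 else 48
    network = ipaddress.ip_network(f"{ip}/{prefix}", strict=False)
    return str(network.network_address)

# Addresses from the documentation-reserved ranges.
print(anonymize_ip("203.0.113.57"))
print(anonymize_ip("2001:db8:abcd:12::1"))
```

Applying a step like this wherever requests are logged means that an exposed log shows only a network range, not the exact address of each user.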

Data training

Data training for generative AI models involves using large datasets to train the model to generate new content, such as images, text, or other forms of media. While data training is crucial for the development of powerful and accurate generative models, it also poses potential security threats, particularly when dealing with sensitive or personal information.

Since generative AI uses user data to learn and improve, sophisticated attackers might attempt to reverse-engineer or reconstruct parts of the training data by analyzing the generated content. This could be used to extract information about individuals present in the training dataset.

Attackers may also attempt model inversion attacks to extract information about the training data by inputting specific queries and analyzing the model’s responses. This can reveal details about individuals or data present in the training set.

To mitigate these security threats, carefully curate and sanitize any data you input, removing sensitive or personally identifiable information.
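As a rough illustration of sanitizing input, the sketch below replaces a few kinds of sensitive values with placeholders before text is sent to a GenAI tool. The patterns and the `redact` helper are hypothetical and deliberately simple; real PII detection needs far broader coverage than three regular expressions.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "IPV4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    # 13 to 16 digits, optionally separated by spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}

def redact(text):
    """Replace each match of a known pattern with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

prompt = "Contact jane.doe@example.com from 192.168.1.20, card 4111 1111 1111 1111."
print(redact(prompt))
```

Running a filter like this before a prompt leaves the organization catches only the obvious cases, so it complements rather than replaces a policy on what employees may share.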

Synthetic data

Synthetic data is artificially generated data that mimics the characteristics of real data. While it can be a valuable tool for training generative AI models, its use carries potential security threats.

The generation of synthetic data might inadvertently capture patterns or relationships present in sensitive or confidential information. This could result in synthetic data that, while not directly revealing the original data, still carries privacy risks.

Adversaries might employ membership inference attacks to determine whether a particular individual or instance was part of the original training data used to generate synthetic data. This could have privacy implications for individuals represented in the synthetic dataset.

To mitigate risks associated with the use of synthetic data, apply the same security precautions that you use for your confidential data in general.
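One simple precaution is to check whether any synthetic record is an exact or near copy of a real one, since such records are the easiest targets for membership inference. The sketch below measures the distance from each synthetic row to its closest real row; the data, function names, and the `min_distance` threshold are hypothetical, and it is a heuristic rather than a full privacy audit.

```python
import math

def closest_real_distance(synthetic_row, real_rows):
    """Euclidean distance from one synthetic record to its nearest real record."""
    return min(math.dist(synthetic_row, real) for real in real_rows)

def flag_near_copies(synthetic_rows, real_rows, min_distance=1.0):
    """Return synthetic records that sit closer than `min_distance` to a real record."""
    return [row for row in synthetic_rows
            if closest_real_distance(row, real_rows) < min_distance]

# Hypothetical (age, income in thousands) records.
real = [(34, 72.0), (51, 120.5), (29, 48.0)]
synthetic = [(34, 72.0), (40, 90.0), (29, 48.3)]

print(flag_near_copies(synthetic, real))
```

In practice the features would need to be scaled to comparable ranges first, and flagged rows would be dropped or regenerated before the synthetic dataset is shared.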

As with any data-related technology, careful consideration and proactive measures are essential to ensure the responsible and secure use of synthetic data in the context of generative AI models.

Social engineering

Generative AI can not only produce extremely convincing text, images, and videos that imitate real humans but also adapt to the situation to a certain degree. This makes it an extremely powerful tool for social engineering: all types of cybersecurity attacks that rely on human emotions such as fear, curiosity, or greed to extort private information from victims.

A recently published research analysis by Darktrace revealed a 135% increase in social engineering attacks using generative AI. For example, experts have warned about a rise in voice scams where attackers imitate the voices of loved ones and colleagues to mislead their victims. Replicating a person’s voice has become a trivial task. While it might seem that social engineering is a relatively easy attack to avoid, in reality, according to Splunk, 98% of cyberattacks rely on social engineering and 90% of data breach incidents target the human element to gain access to sensitive business information.

Conclusion

Generative AI can be both a threat to organizational cybersecurity and a means to strengthen defenses and the skills of cybersecurity teams. Cybersecurity professionals shouldn’t overlook either its negative or its positive aspects.
