Modern cloud native computing relies heavily on containers and Kubernetes. Although Kubernetes is a relatively new technology, many global enterprises use it to manage business-critical applications in production. Its popularity is driven by a growing range of features, such as enhanced security, better management of microservices, improved observability, and more efficient scaling and resource use.

In this article, we take a look at the essence of the technology, its architecture, and its real-world applications.

What is Kubernetes?

Kubernetes, also known as K8s, is an open-source container orchestration platform originally developed by Google and open-sourced in 2014. It automates the deployment, scaling, and management of containerized applications. Kubernetes allows multiple containers to run on the same machine and also manages container-based applications across multiple machines, which can be physical servers, virtual machines, or cloud instances.

Kubernetes is not a conventional PaaS (platform as a service), that is, a cloud computing model that provides developers with a platform and environment to build, deploy, and manage applications. It does, however, offer key PaaS-like features, including deployment, scaling, and load balancing, and it lets users plug in their own logging, monitoring, and alerting solutions. Kubernetes is modular, not monolithic: its default solutions are optional and interchangeable. It offers the essential components for building developer platforms while preserving user choice and flexibility in critical areas. Note, however, that the open-source project itself comes with no formal vendor support; support is left to the community and to commercial distributions and managed services.

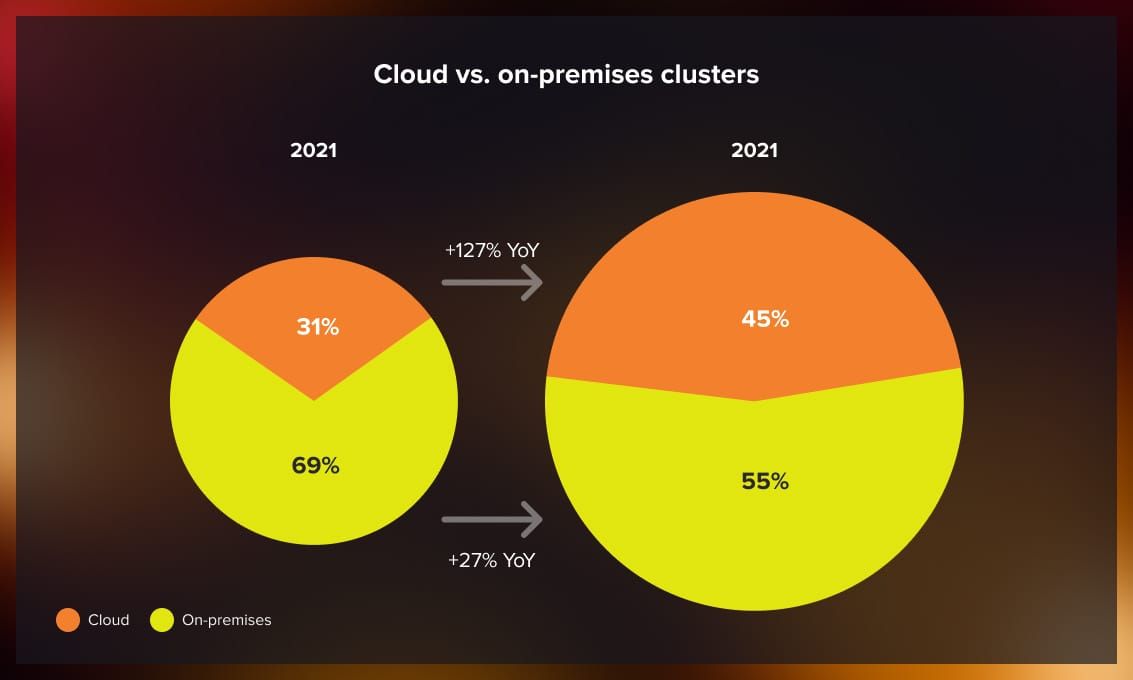

According to Dynatrace, Kubernetes became the preferred platform for migrating workloads to the public cloud in 2022. With an annual growth rate of 127%, the number of Kubernetes clusters in the cloud expanded about five times faster than those hosted on-premises. Additionally, the proportion of clusters hosted in the cloud rose from 31% in 2021 to 45% in 2022.

Source: Dynatrace


What problems does Kubernetes solve?

To better understand the practical purposes of Kubernetes, let’s consider two real-world examples.

Scenario 1: Standalone application deployment

Imagine deploying a Java application by containerizing it and running it on a server with Docker Engine installed. Here, you would write a Dockerfile, build a Docker image from it, run the container, and publish a host port for external access. However, such a setup depends on a single server: if that server fails, the application goes down with it.

Kubernetes addresses the single-server vulnerability by enabling on-demand application scaling and resilience against single-node failures. Additionally, it facilitates application self-healing and enables rolling updates, which are crucial for robust container management.

Scenario 2: Complex microservices deployment

Consider a larger application divided into various microservices (such as UI, user management, and transaction processing). These components must communicate via REST APIs or other protocols and cannot be efficiently managed on a single server. The complexity increases with the need for separate deployment and scaling of each microservice.

Kubernetes simplifies the orchestration of these complex processes, accelerating development and deployment while ensuring system robustness. It efficiently handles networking, shared file systems, load balancing, and service discovery, among other tasks.

What are the benefits?

The overall benefits of Kubernetes can be summed up as follows:

- Automation: Streamlines the deployment, scaling, and management of applications, which improves both developer productivity and application performance.

- Load balancing: Distributes traffic automatically across the available replicas, which helps maintain consistent operations and prevents any single replica from becoming overloaded.

- Self-healing: Automatically recovers from failures by restarting or replacing failed containers.

- Automatic container scheduling: Ensures optimal placement and management of containers.

- Scalability: Supports both horizontal and vertical scaling methods.

- Portability: Facilitates smooth transitions of applications between on-premises and cloud platforms, enabling migrations to and from cloud services without significant modifications.

- Continuous deployment: Facilitates rolling updates and rollbacks with zero downtime, ensuring continuous service availability.

- Ecosystem: Has a comprehensive set of extensions, plugins, and tools, allowing for efficient management and deployment of applications with additional support for logging, monitoring, security, and troubleshooting.
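To make the idea of a zero-downtime rolling update more concrete, here is a minimal Python simulation. It is only a sketch: the function `rolling_update` is hypothetical, its `max_surge` and `max_unavailable` parameters are loosely modeled on the Deployment fields of similar names, and it assumes that new replicas become ready instantly, which real pods do not.

```python
def rolling_update(replicas: int, max_surge: int = 1, max_unavailable: int = 0):
    """Simulate a rolling update and return the (old, new) replica counts per step.

    Simplifying assumption: a new replica is ready as soon as it is created.
    """
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be zero")

    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Start new replicas without exceeding replicas + max_surge in total.
        can_add = min(replicas - new, replicas + max_surge - (old + new))
        new += max(can_add, 0)
        # Stop old replicas while keeping at least replicas - max_unavailable running.
        can_remove = min(old, (old + new) - (replicas - max_unavailable))
        old -= max(can_remove, 0)
        steps.append((old, new))
    return steps


if __name__ == "__main__":
    for old, new in rolling_update(3, max_surge=1, max_unavailable=0):
        print(f"old={old} new={new} total={old + new}")
```

With `max_unavailable=0`, the total number of running replicas never drops below the requested count, which is what keeps the service available during the update.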

Kubernetes architecture

The Kubernetes architecture is designed to automatically handle failover, scaling, deployment patterns, and more.

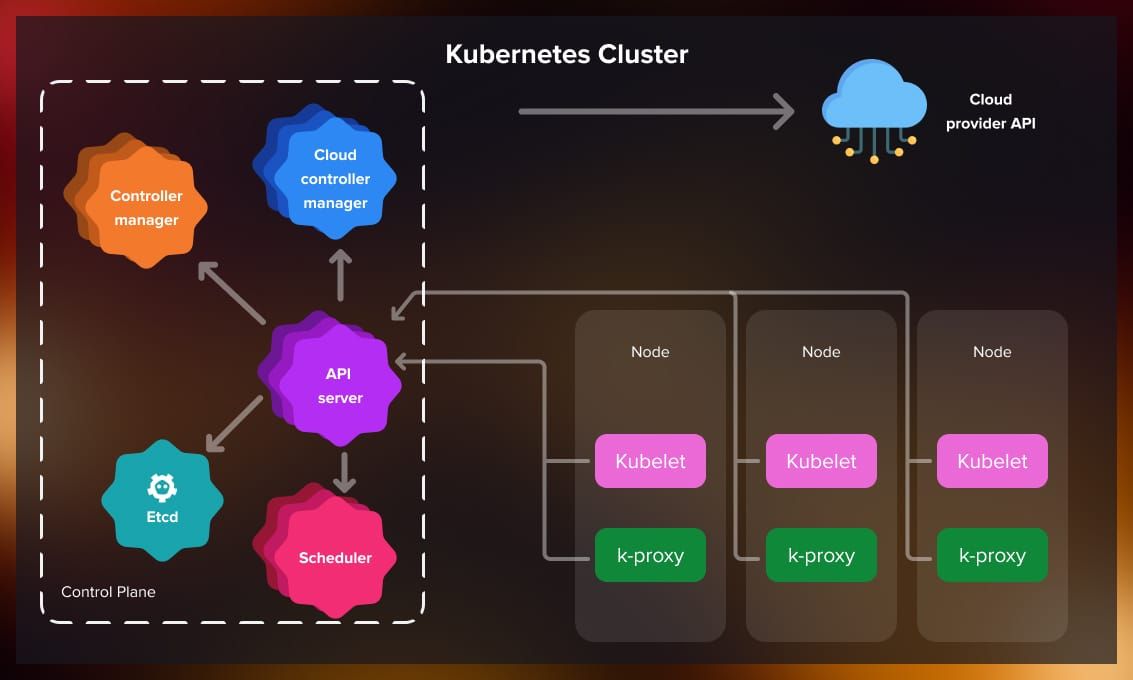

Cluster architecture

Kubernetes employs a client-server architecture with master and worker nodes. The master node runs the Control Plane. Typically, a production cluster has multiple master nodes operating in “high availability” mode to mitigate potential downtime of a single master node.

Components

A Kubernetes cluster consists of worker machines known as Nodes and a Control Plane. Each cluster must have at least one worker node. The kubectl command-line interface (CLI) interacts with the Control Plane, which in turn manages the worker nodes.

- Nodes: The worker nodes are crucial for the Kubernetes cluster, as they host the containers where applications are deployed.

- Control plane: Oversees the worker nodes and the pods they contain.

Control plane

The control plane is a set of components that manage the cluster’s overall health, such as setting up new pods, destroying old pods, or scaling existing pods. Its key components include:

- kube-apiserver: This is the entry point to the cluster, exposing the Kubernetes API. It authenticates and validates requests from the kubectl CLI and other clients and records the resulting state, which the other components then act on. All requests must go through the API server.

- kube-scheduler: Watches for newly created pods that have no node assigned and selects the most suitable node for each one, based on resource requirements and other constraints.

- kube-controller-manager: This component runs various controllers that manage the cluster’s control loops. These include the replication controller for maintaining the desired number of application replicas and the node controller for monitoring node health.

- etcd: A consistent key-value store that holds the cluster’s configuration and state, including the desired state of every resource. Making the actual state match the desired state is the job of the controllers and the scheduler, not of etcd itself. Other components do not talk to etcd directly: all reads and writes of cluster state go through kube-apiserver.
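The scheduler's decision can be pictured as two phases: filtering out nodes that cannot run the pod, then scoring the remaining ones. The Python sketch below illustrates that idea only. The `Node` class, the `schedule` function, and the scoring rule (prefer the node with the most CPU left over) are assumptions made for this example, not the actual kube-scheduler logic, which considers many more factors.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu_millis: int   # unallocated CPU, in millicores
    free_memory_mib: int   # unallocated memory, in MiB


def schedule(pod_cpu_millis: int, pod_memory_mib: int, nodes: List[Node]) -> Optional[str]:
    """Pick a node for a pod, or return None if no node fits."""
    # Phase 1 (filter): keep only nodes with enough free resources.
    feasible = [
        n for n in nodes
        if n.free_cpu_millis >= pod_cpu_millis and n.free_memory_mib >= pod_memory_mib
    ]
    if not feasible:
        return None  # in a real cluster the pod would stay Pending

    # Phase 2 (score): illustrative rule, prefer the most leftover CPU, then memory.
    best = max(
        feasible,
        key=lambda n: (n.free_cpu_millis - pod_cpu_millis,
                       n.free_memory_mib - pod_memory_mib),
    )
    return best.name


if __name__ == "__main__":
    cluster = [
        Node("node-a", 500, 1024),
        Node("node-b", 2000, 4096),
        Node("node-c", 4000, 512),
    ]
    print(schedule(1000, 1024, cluster))
```

Returning `None` when nothing fits mirrors the way an unschedulable pod simply waits until capacity becomes available.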

Node components

Nodes are where the real work happens, and each can host multiple pods with containers inside. The key node processes include:

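- Container runtime: Necessary for running the application containers in the pods. Examples include containerd and CRI-O (since Kubernetes 1.24, Docker Engine is supported only through the cri-dockerd adapter).

- kubelet: An agent that runs on each node and acts as a bridge between the control plane and the container runtime. It is responsible for starting the pods and their containers and keeping them running.

- kube-proxy: Maintains network rules on each node so that traffic addressed to a Service, whether it comes from inside or outside the cluster, is forwarded to the correct pods.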
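The forwarding described above can be sketched in a few lines of Python. This is a simplified model, not how kube-proxy is implemented: the `ServiceSim` class and the pod dictionaries are hypothetical, and round-robin is used only to keep the example deterministic, since the real backend selection depends on the proxy mode.

```python
def ready_endpoints(selector: dict, pods: list) -> list:
    """Return IPs of ready pods whose labels include every selector label."""
    return [
        pod["ip"]
        for pod in pods
        if pod["ready"] and selector.items() <= pod["labels"].items()
    ]


class ServiceSim:
    """Toy model of a Service that spreads requests over matching pods."""

    def __init__(self, selector: dict):
        self.selector = selector
        self._next = 0

    def route(self, pods: list) -> str:
        endpoints = ready_endpoints(self.selector, pods)
        if not endpoints:
            raise RuntimeError("no ready endpoints for this Service")
        ip = endpoints[self._next % len(endpoints)]
        self._next += 1
        return ip


if __name__ == "__main__":
    pods = [
        {"ip": "10.0.0.1", "labels": {"app": "web"}, "ready": True},
        {"ip": "10.0.0.2", "labels": {"app": "web"}, "ready": False},
        {"ip": "10.0.0.3", "labels": {"app": "db"}, "ready": True},
    ]
    svc = ServiceSim({"app": "web"})
    print(svc.route(pods))
```

Pods that are not ready or whose labels do not match the selector never receive traffic, which is the behavior the sketch is meant to show.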

Add-ons and plugins

Add-ons use Kubernetes resources such as DaemonSet, Deployment, and others to implement cluster features. They are managed as cluster-level resources within the kube-system namespace. Popular add-ons include CoreDNS, KubeVirt, ACI, and Calico.


Resources, controllers, operators

Now, let’s examine Kubernetes processes. To do this, we need to understand three core concepts: resources, controllers, and operators.

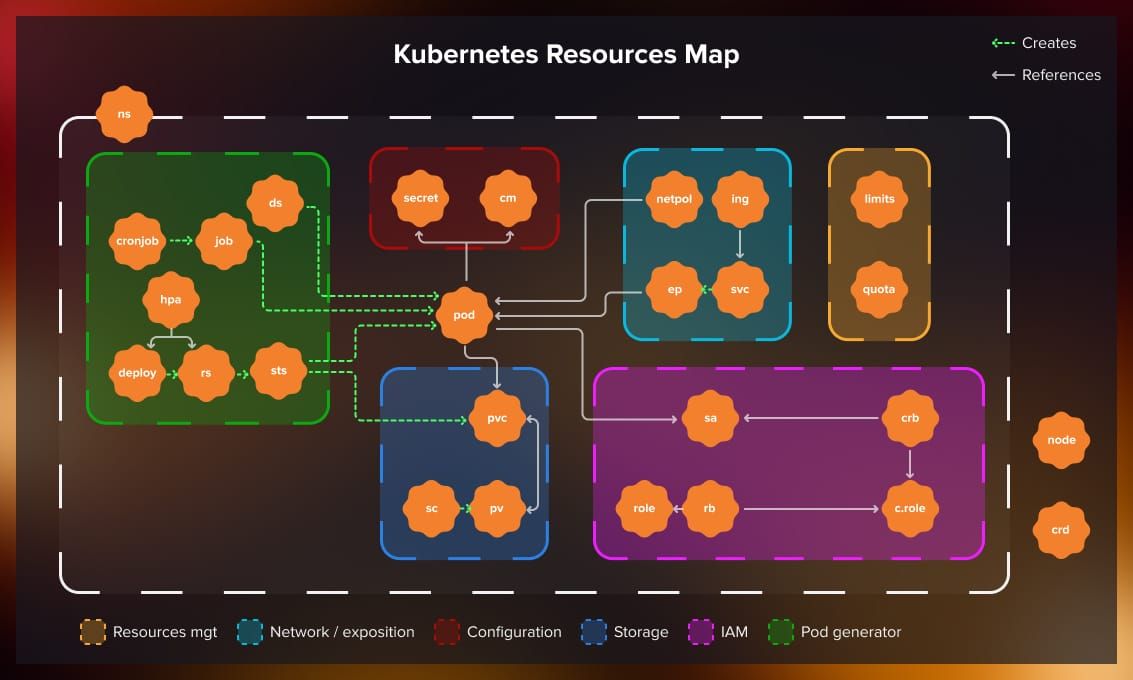

Resources

A “Resource” is an entity that represents the state of the cluster or a part of it. There are two types of resources:

- Built-in resources: These include predefined objects like Pods, Services, Deployments, and PersistentVolumes, which are integral for configuring and managing your containerized applications within Kubernetes.

- Custom resources: Custom resources are extensions of the Kubernetes API that are not available by default. They allow users to create their own APIs that can be managed by the orchestration platform. This is often used to introduce new functionality without having to build a completely new Kubernetes version.
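As an illustration of what a built-in resource looks like, the following Python sketch builds a Deployment manifest as a plain dictionary and prints it as JSON, a format that kubectl accepts alongside YAML. The helper `make_deployment`, the name `web`, and the image `nginx:1.27` are placeholders chosen for this example.

```python
import json


def make_deployment(name: str, image: str, replicas: int) -> dict:
    """Build a minimal Deployment manifest as a Python dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,                  # desired number of pods
            "selector": {"matchLabels": labels},   # which pods this Deployment owns
            "template": {                          # pod template used for each replica
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


if __name__ == "__main__":
    # The output could be saved to a file and passed to `kubectl apply -f`.
    print(json.dumps(make_deployment("web", "nginx:1.27", 3), indent=2))
```

The manifest only declares the desired state (three replicas of one container image); it is the control plane that works out how to reach it.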

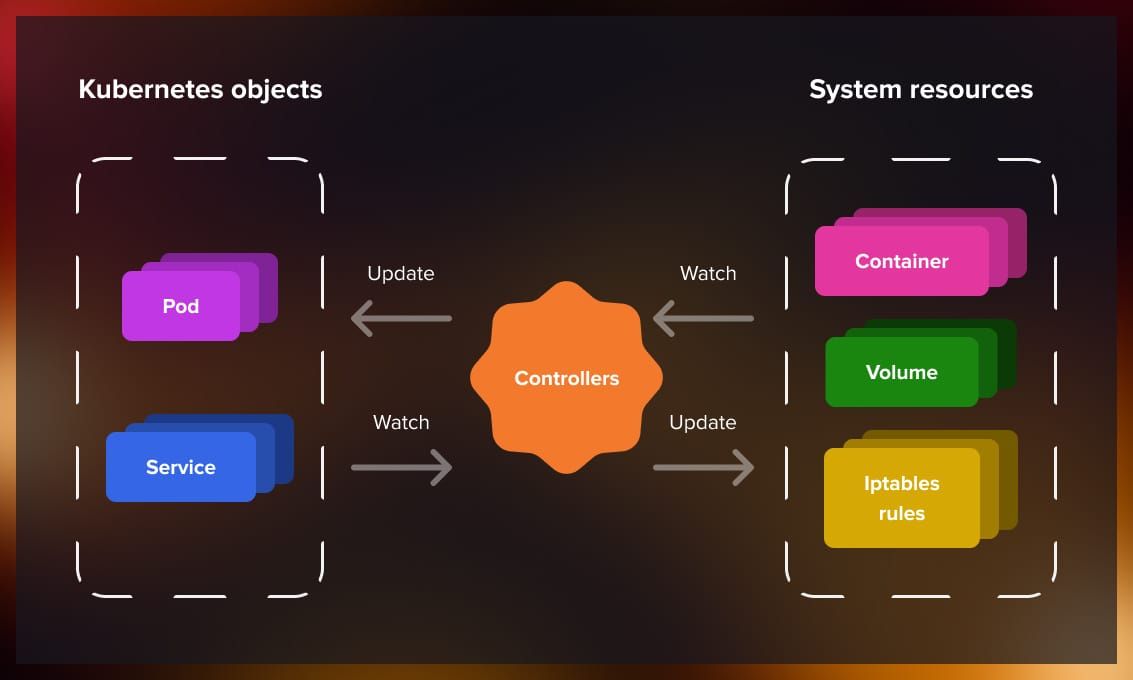

Controllers

Controllers are the Kubernetes brain and are integral to its self-healing capability. They are control loops that watch the state of resources in the cluster and make or request changes where needed. Controllers work to bring the current state of the cluster closer to the desired state. Some common controllers include:

- Deployment and ReplicaSet: A ReplicaSet ensures that a certain number of pod replicas are running at any given time. A Deployment manages a stateless application by creating and updating ReplicaSets and their Pods.

- StatefulSet: It handles the deployment and scaling of Pods and guarantees their ordering and uniqueness. Unlike Deployment/ReplicaSet, this controller is intended for stateful applications that need stable identities and resources like persistent storage.

- DaemonSet: Ensures that all (or some) Nodes run a copy of a Pod. This controller is typically used to run logging and/or monitoring daemons on the nodes.

- Job: Manages a task that runs to completion.
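The control-loop idea behind these controllers can be sketched as a small Python simulation. The `ReplicaSetSim` class below is hypothetical and heavily simplified (for instance, real pod names get random suffixes rather than counters), but it shows the core pattern: compare the desired state with the actual state and act on the difference.

```python
import itertools


class ReplicaSetSim:
    """Toy control loop that keeps the number of pods equal to `replicas`."""

    def __init__(self, name: str, replicas: int):
        self.name = name
        self.replicas = replicas          # desired state
        self.pods = set()                 # actual state
        self._ids = itertools.count()     # simplistic pod naming, for illustration

    def reconcile(self) -> list:
        """Run one pass of the loop and return the actions that were taken."""
        actions = []
        while len(self.pods) < self.replicas:
            pod = f"{self.name}-{next(self._ids)}"
            self.pods.add(pod)
            actions.append(("create", pod))
        while len(self.pods) > self.replicas:
            pod = sorted(self.pods)[-1]
            self.pods.remove(pod)
            actions.append(("delete", pod))
        return actions


if __name__ == "__main__":
    rs = ReplicaSetSim("web", 3)
    print(rs.reconcile())      # initial creation
    rs.pods.discard("web-1")   # simulate a crashed pod
    print(rs.reconcile())      # self-healing: a replacement is created
```

Calling `reconcile` again when nothing has changed returns an empty list, which reflects the idempotent nature of a control loop.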

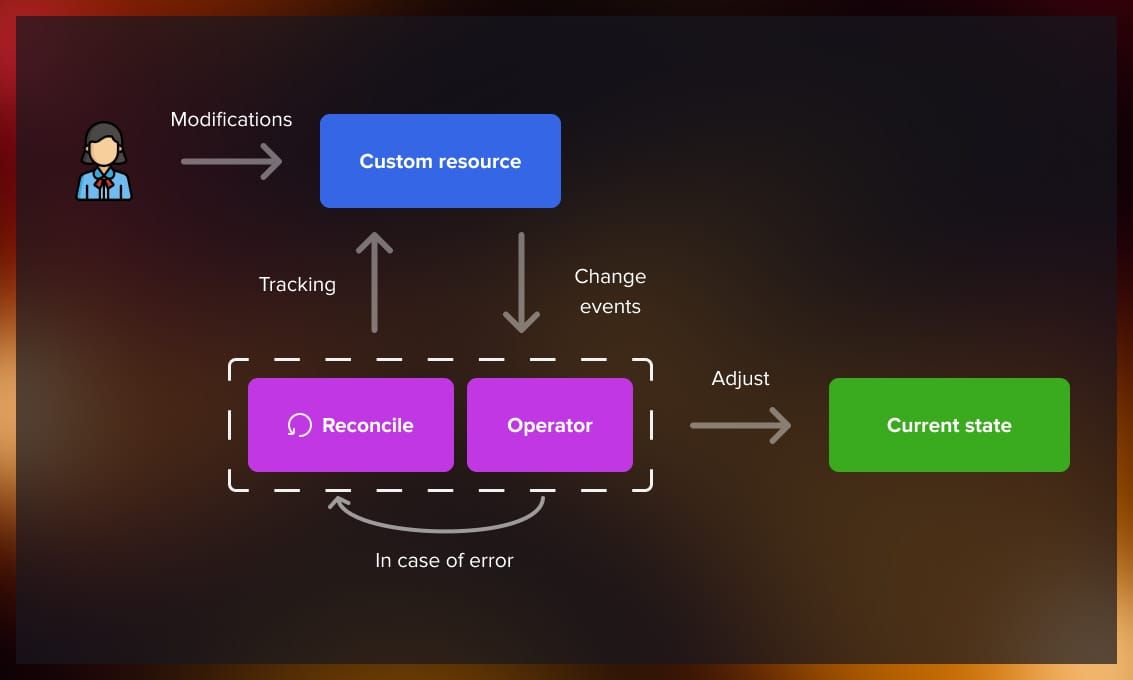

Operators

Operators are a way of extending Kubernetes’ capabilities, allowing developers to encode domain knowledge for specific applications into software. An operator is a custom controller that manages applications and their parts. Operators follow Kubernetes principles, notably the control loop, to maintain an application in a certain state. They are particularly useful for complex stateful applications that have specific maintenance, scaling, and backup needs.

For example, an operator could manage a database application by handling tasks such as provisioning instances, taking backups, and handling upgrades.
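A rough Python sketch of such a database operator's decision logic is shown below. Everything in it is hypothetical: the function `plan_database_actions`, the `spec` and `status` field names, and the rule "always back up before upgrading" are assumptions meant to show how domain knowledge can be encoded, not the behavior of any real operator.

```python
def plan_database_actions(spec: dict, status: dict) -> list:
    """Compare the desired spec with the observed status and list the next actions."""
    actions = []
    running = status.get("instances", 0)

    # Provision any instances that are missing.
    missing = spec["instances"] - running
    if missing > 0:
        actions.append(f"provision {missing} instance(s)")

    # Upgrades and backups only make sense once something is running.
    needs_upgrade = running > 0 and status.get("version") != spec["version"]
    backup_due = (
        running > 0
        and status.get("hours_since_backup", 0) >= spec["backup_interval_hours"]
    )

    # Domain knowledge: take a backup before any upgrade, even if none is due yet.
    if backup_due or needs_upgrade:
        actions.append("take backup")
    if needs_upgrade:
        actions.append(f"upgrade to {spec['version']}")
    return actions


if __name__ == "__main__":
    spec = {"instances": 3, "version": "16", "backup_interval_hours": 24}
    status = {"instances": 3, "version": "15", "hours_since_backup": 2}
    print(plan_database_actions(spec, status))
```

A generic controller would not know that a backup should precede an upgrade; capturing that kind of rule is what distinguishes an operator.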

What is the difference between Kubernetes and Docker?

Now that we understand the architecture and operational methods of Kubernetes, let’s address a common question about containerization: In which cases do we need Kubernetes, in which cases Docker, and are they interchangeable?

Kubernetes and Docker are both essential DevOps tools, but they serve different purposes. They are often used together and were designed to complement each other rather than compete. While Docker focuses on the containerization and initial management of applications, such as container creation and configuration, Kubernetes manages the lifecycle and scalability of these containers within larger systems.

Docker overview

Docker is an open platform designed to create, deploy, and manage applications that are packaged into containers. These containers are self-sufficient units capable of running both in cloud and on-premises environments. Docker allows applications to run in isolated parts of the system: containers share the host operating system’s kernel, yet each one behaves as if it had its own separate OS.

Docker’s key features are:

- Fast and easy setup.

- Higher performance compared to applications running in virtual machines.

- Isolation of applications by containerization.

- Enhanced security management through features like image signing, resource limitations, network segmentation, security scanning, and runtime security measures.

Kubernetes overview

In contrast, Kubernetes is primarily a container scheduler that focuses on the scaling and deployment of applications. It was specifically developed to operate within a cluster environment, unlike Docker, which is optimized for single-node operations (note, however, that today Docker also has Swarm mode, which serves as a container orchestrator). Kubernetes does not run containers by itself: it requires a container runtime on each node.

What are the applications of Kubernetes in IT?

Kubernetes has become the standard for container management, and it is employed across a wide variety of use cases.

Large-scale app deployment: High-traffic websites and cloud applications handle millions of user requests every day. Kubernetes is particularly effective in these environments due to its autoscaling capabilities, which automatically adjust application capacity to efficiently match demand fluctuations, minimizing downtime. It employs horizontal pod autoscaling to manage loads based on CPU usage or custom metrics, effectively handling traffic spikes, hardware failures, or network disruptions. It’s important to distinguish this application from Kubernetes’ vertical pod autoscaling, which increases resources such as memory or CPU for existing pods.
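The core of horizontal pod autoscaling is a simple ratio: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The Python sketch below illustrates that calculation. The function name and default bounds are chosen for this example, and the real autoscaler applies further rules (such as stabilization windows and handling of unready pods) that are omitted here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Compute the replica count from the ratio of observed to target metric."""
    ratio = current_metric / target_metric
    # Skip scaling when the metric is close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Keep the result within the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Average CPU utilization is 90% against a 60% target, with 4 replicas running.
    print(desired_replicas(4, current_metric=90, target_metric=60))
```

Because the result is rounded up and clamped between `min_replicas` and `max_replicas`, a traffic spike adds capacity quickly but never beyond the configured ceiling.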

High-performance computing (HPC): Industries such as government, science, finance, and engineering rely on HPC to perform complex calculations on large data sets at high speeds. These calculations enable immediate data-driven decisions for activities such as stock trading, weather forecasting, DNA sequencing, and flight simulation. Kubernetes helps distribute HPC tasks across hybrid and multi-cloud environments and supports batch job processing, enhancing the portability of data and code within these demanding workloads.

AI and machine learning: Deploying AI and machine learning systems involves managing vast amounts of data and complex computational processes. Kubernetes simplifies this by automating the management and scaling of machine learning life cycles. It also speeds up the deployment of large language models used in natural language processing tasks such as text classification and machine translation. As generative AI becomes more prevalent, Kubernetes ensures these models are run efficiently, offering high availability and fault tolerance.

Microservices management: In microservices architecture, an application is divided into numerous smaller, independently deployable services, each with its own API. This approach is typical in large retail e-commerce platforms, which might include services for orders, payments, shipping, and customer support. Kubernetes handles the complexity of managing these services simultaneously, providing high availability and self-healing capabilities to ensure continuous operation and quick recovery from failures.

Hybrid and multi-cloud deployments: Kubernetes is designed to operate across different environments, making it easier for organizations to migrate applications from on-premises to hybrid or multi-cloud settings. It standardizes deployment processes and abstracts away the underlying infrastructure details, enabling applications to move seamlessly between different clouds or data centers.

Enterprise DevOps: For enterprise DevOps teams, the ability to rapidly update and deploy applications is crucial for maintaining business agility. Kubernetes supports these efforts with its API interface, which allows developers and other stakeholders straightforward access to deploy, update, and manage their container ecosystems. Furthermore, Kubernetes plays an important role in cloud-native CI/CD pipelines by automating container deployment and optimizing resource use across cloud infrastructures, essential for continuous integration and delivery.

How are big companies using Kubernetes?

Among the companies that have adopted Kubernetes are Google, The New York Times, Adidas, Tinder, Spotify, Airbnb, and many others. In this section, we describe some of the case studies found on the official Kubernetes website.

Spotify

Launched in 2008, this audio-streaming platform grew to over 200 million monthly active users worldwide. Initially, Spotify utilized Docker and microservices managed by its proprietary system, Helios. By late 2017, the limitations of maintaining Helios with a small team became apparent, prompting a shift towards Kubernetes. This migration, initiated in 2018, allowed the company to run Kubernetes alongside Helios, ensuring a smooth transition process. The adoption of the orchestration platform significantly enhanced operational efficiency, sped up service deployment from hours to mere minutes, and improved CPU utilization dramatically. The largest service running on Kubernetes handles around 10 million requests per second and benefits from significant autoscaling and multi-tenancy capabilities.

The New York Times

Several years ago, The New York Times began transitioning away from traditional data centers, initially moving smaller, less critical applications to public cloud virtual machines. The adoption of the Google Cloud Platform and Kubernetes-as-a-service, GKE, dramatically improved the efficiency of their infrastructure. Deployment times were reduced from 45 minutes to just seconds or a couple of minutes. Additionally, the integration of Cloud Native Computing Foundation technologies streamlined deployment processes across the engineering team. This allowed for more frequent and independent updates, enhancing operational agility and system portability.

Pinterest

After eight years of growth, Pinterest had expanded to 1,000 microservices with a complex infrastructure and diverse setup tools and platforms. In 2016, they initiated a roadmap toward a new computing platform. The objective was to provide the fastest path from an idea to production while abstracting away the complexities of the underlying infrastructure. The first step involved transitioning services to Docker containers, which were fully deployed by early 2017. Subsequently, they decided to shift to orchestration to enhance efficiency and manage services in a decentralized manner, which led to the adoption of Kubernetes. The move to Kubernetes enabled on-demand scaling and new failover policies and simplified the deployment and management of complex systems such as Jenkins. This transition not only reduced build times but also significantly increased efficiency, with the team reclaiming over 80% of capacity during non-peak hours and reducing instance-hours by 30% daily in their Jenkins Kubernetes cluster compared to the previous static setup.

Adidas

Adidas faced significant delays in accessing its software tools. The processes for acquiring developer resources were cumbersome and slow, sometimes taking up to a week. To streamline these operations and speed up project deployment, Adidas decided to adopt a developer-centric approach. This led them to implement containerization, agile development, continuous delivery, and a cloud native platform incorporating Kubernetes and Prometheus. Within just six months, the entire Adidas e-commerce platform was operational on Kubernetes. This transition not only decreased the website load time by half but also increased release frequency from every four to six weeks to multiple times daily. Today, Adidas operates 4,000 pods, 200 nodes, and processes 80,000 builds per month, with 40% of its critical systems running on this advanced cloud-native platform.

Conclusion

The use of Kubernetes as a container orchestrator has been rapidly increasing. By streamlining deployment, scaling, and management of containerized applications, this platform offers a robust solution for enterprises looking to enhance their operational efficiencies and integrate modern technological capabilities.

In this article, we have walked through the main concepts and operating principles of Kubernetes. If you want to learn more about it, the best way is to start containerizing your earlier projects and try deploying them.

If you are looking for an external Kubernetes team, Serokell is ready to offer an efficient solution or provide consultation on its adoption.
