In this article, I interview Sergei Markov, the chief of the SberDevices experimental machine learning systems department.

We talk about one of Sber’s latest projects in machine learning – ruDALL-E, an image generation model. We also discuss the open-source culture at Sber and how machine learning programming and models like ruDALL-E can be used for design and art.

Interview with Sergei Markov

Could you please describe the ruDALL-E project for our readers?

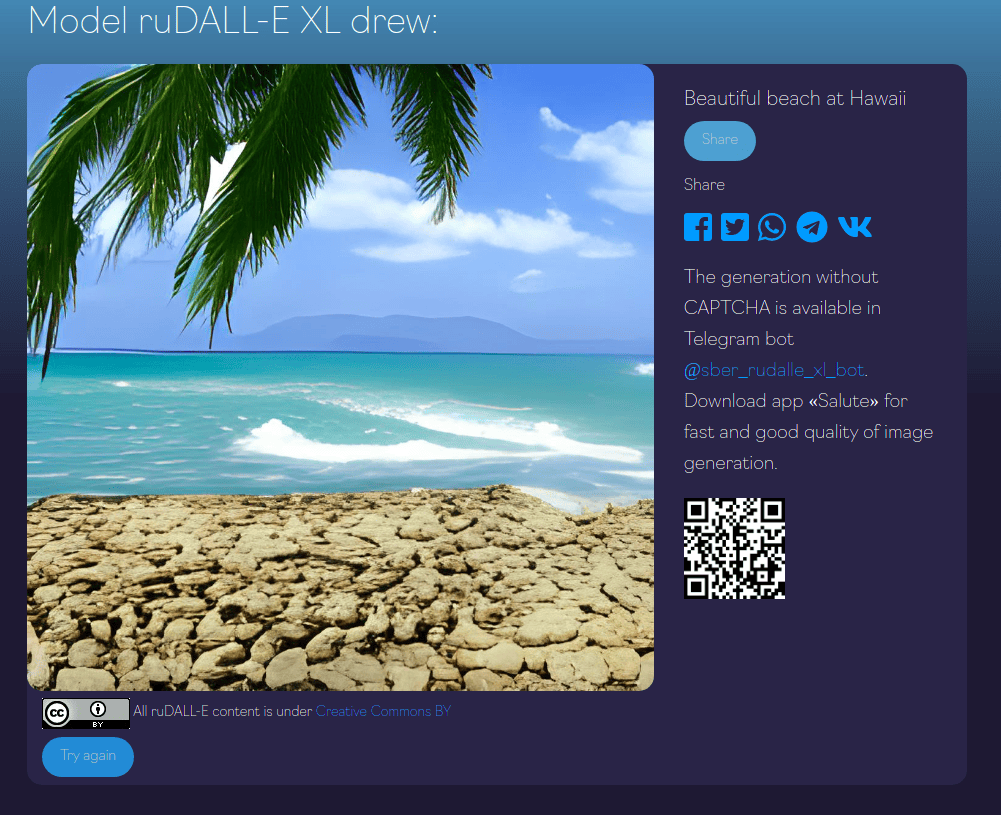

ruDALL-E is an open-source neural network that can generate images from texts. It can create infinitely many original images from text prompts, and it also can continue drawing existing images. You can try it yourself – the demo is available at rudalle.ru.

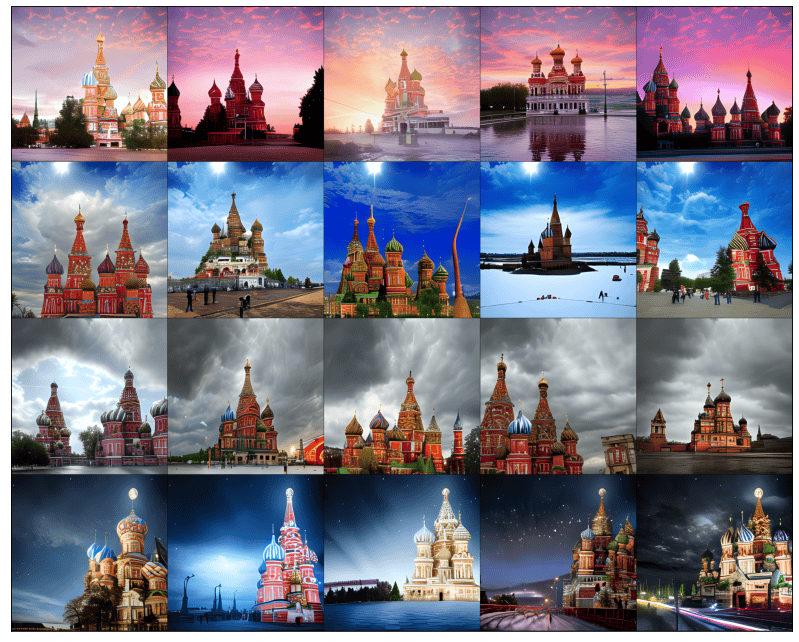

The demo works with automatic translation to Russian since the model is originally trained on Russian language data. The model is considered the greatest computational project in Russia for now, totaling 24,256 GPU days to train the models.

Of course, the model inherits some things specific to Russian: for example, common phrases and associations. But in general, it can generate any concept, for example, draw landscapes, art concepts, common objects.

What are the difficulties in generating images from textual descriptions, and how does a transformer architecture help solve that?

In 2021, we now have a lot of generative architectures with different capabilities – StyleGANs, VQ-GAN, etc. – but there are still few models that allow out-of-domain generalization and zero-shot generation of any concept. In this case, transformer architectures are top dogs.

The architecture, which is described in OpenAI’s paper, is a decoder architecture, which generates 256×256 pixel images from text queries.

What is the connection between ruDALL-E and DALL-E, the AI project by OpenAI? Could you describe the differences between these two?

At Sber, we are closely following new scientific publications in the field of deep learning.

In the ruDALL-E project, we have paid specific attention to exploring the best generation parameters. Thus, we have incorporated the following 3-step process:

- The generative decoder gets the text query, tokenizes it with ruGPT-3 small tokenizer (128 tokens max), then decodes it line by line in a 32×32 pixel image, then the picture is reconstructed to 256×256 pixels.

- The generation is repeated several times with different hyperparameters (top p, top k), then the ranging model (ruCLIP model, also open-source) decides which image is the most relevant for the text query.

- Finally, the superresolution model enlarges the image up to 1024×1024 pixels.

The Colab-friendly pipeline is available on GitHub and works as a one-liner of code.

Are there any benefits that ruDALL-E has over DALL-E?

First of all, there are a few things going for the DALL-E model that make it attractive to recreate it and explore the limits of technology. In the OpenAI blog, we have seen the cherry-picked yet absolutely stunning examples of the model’s possibilities.

In contrast to DALL-E, ruDALL-E is open-source with Apache 2.0 license. Both the code and model checkpoints are available, which makes it possible for the community to continue further development:

- We have already seen the first experiments in image prompting. (A text string is used together with an image prompt, and the resulting generation is influenced by both the text query and the image).

- Fine-tuning the model is widely used for special domains: art concepts for game design, fashion design, customizing photo stocks.

The code for model training and inference is available in our repo. There are two major changes in the original architecture: 1) the replacement of the original dVAE autoencoder with our custom SBER VQ-GAN (it performs better on human faces), and 2) the inclusion of the superresolution ANN into the model pipeline.

Stay tuned for an upcoming arXiv report!

How big of a role does open source play in Sber?

We believe that open source is essential for scientific progress. This includes not only deep learning but science in general: reproducing results of other studies, testing them for possible bugs and vulnerabilities, fixing the errors, and introducing better solutions. Being out of this cycle is fraught with delays.

Communities are also a great help for state-of-the-art development and can reciprocate with great solutions. This has also happened for us not even once: this October we received a pull request on GitHub that speeded up the inference of the decoder models by up to 10 times! We have implemented it in both ruDALL-E and ruGPT-3 generation by now.

How did you decide to adopt the idea of DALL-E for the Russian market? Could you describe the process of adoption? Where did you source the data from?

Oh, we hope we are not only adopting the idea for the Russian market. This is, by no means, the first model like this to be open-sourced and publicly available. We don’t know for sure why OpenAI hasn’t shown its work in a more reproducible way. But this step is definitely done to stimulate the further openness and progress of such models.

For this project, we have collected 120 million pairs of images and text queries from web sources, various CV datasets, Kaggle, classic art, and so on. The model now exists in two versions, each of a different size:

- ruDALL-E XXL with 12.0 billion parameters

- ruDALL-E XL with 1.3 billion parameters

Sber previously worked on an alternative version of GPT-3. Did the work done on this initiative help you when working on ruDALL-E?

Yes, actually, it was quite the same team that created the ruGPT-3 model. See our main repo, it is still our most popular project. The initial training code was mostly based on a branch of the ruGPT-3 model.

Creating a team of ML specialists in Russia is quite an uphill climb: we are aiming to be incorporated into the competitive DL-research process and participate in it on an equal footing with the teams of Facebook Research, DeepMind, Google, and, clearly, OpenAI.

All in all, we have an enthusiastic team of researchers from SberDevices, Sber AI, Samara University, AI Research Institute, and SberCloud, who all actively contributed.

Are there any areas where the ruDALL-E neural network can be useful? Have you been informed of any cool use cases?

We follow the #ruDALLE hashtag on Twitter – and there are plenty of new implementations!

Mostly, there are game design concepts, as mentioned above, but also some interesting model fine-tunes for design.

For example, a developer has fine-tuned the model to generate new sneaker models:

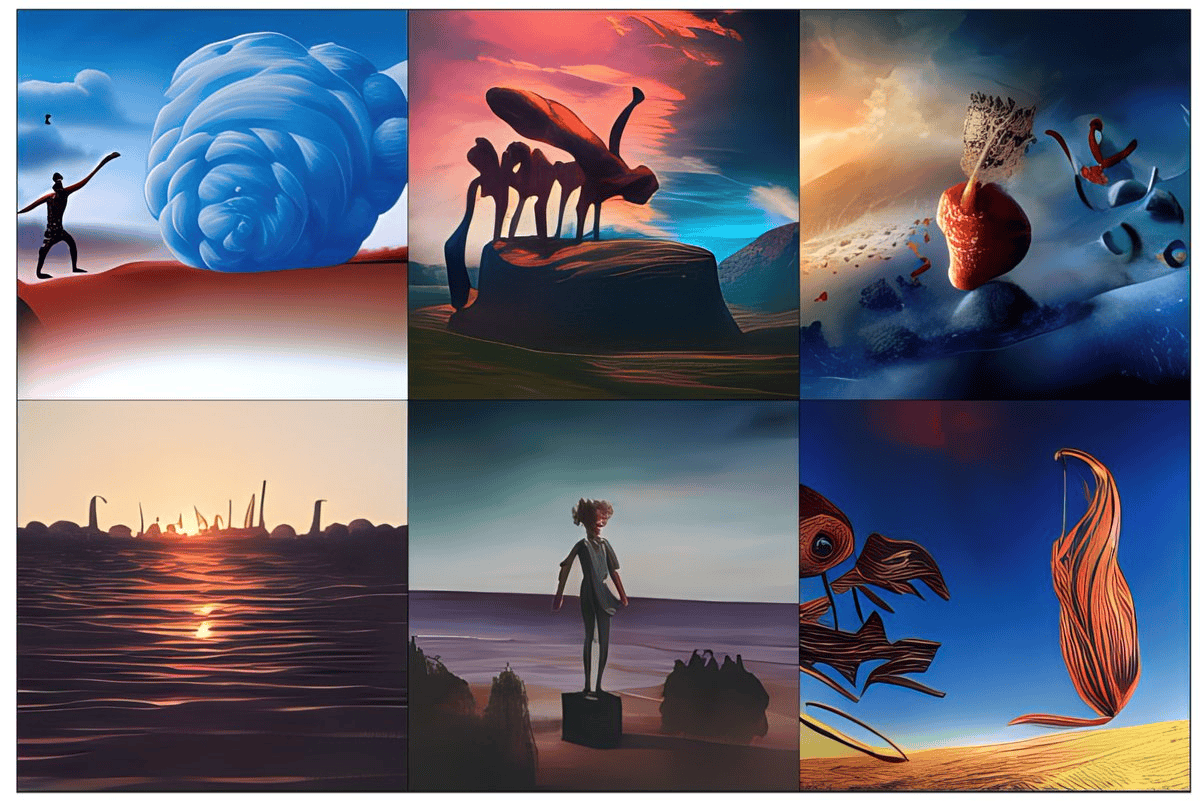

Without any model tuning, you can use image prompts to change the generation results:

What do you think about the rise of generative AI art? Is ruDALL-E getting used for this purpose?

We are absolutely for it! Of course, it is debatable whether the output of generative transformers can always be considered art, nevertheless, models like ruDALL-E can be easily implemented in concept generation and auxiliary tools for artists.

Also, who is then considered the artist – the model or the developer of the model?

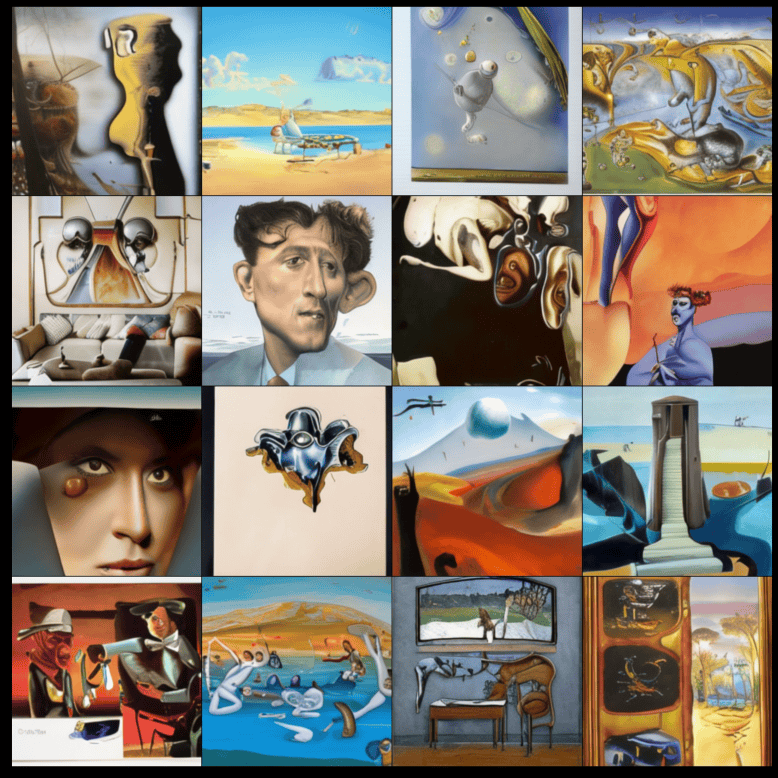

Some other pictures that Salvador Dali didn’t draw:

Will ruDALL-E be integrated with any Sber products like the Salute voice assistant?

ruDALL-E is now incorporated as a skill in the Salute app. If you ask the assistant to draw you a picture, it will use the model.

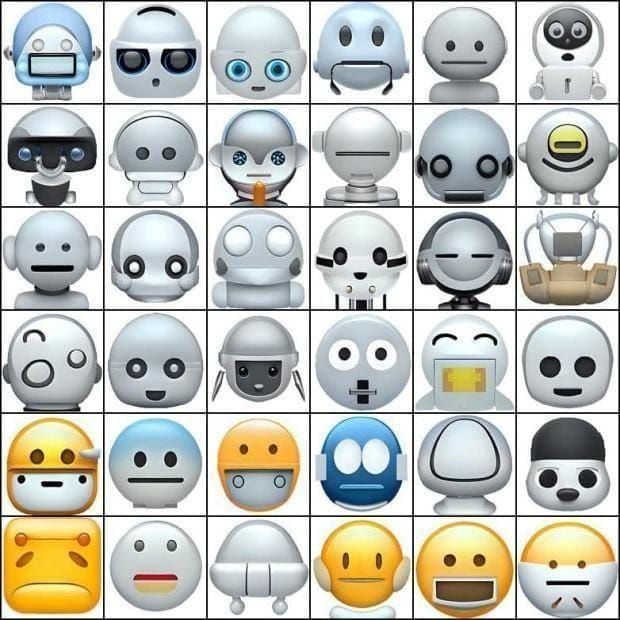

We have now also implemented the first official fine-tune of the model – Emojich (e.g. Malevich for Emoji). Emojich can be used to generate custom emoji and stickers.

You can try out Emojich via the Telegram bot or the demo website.

Did you run into any difficulties while developing ruDALL-E? Are there some cool stories you would want to share with other machine learning developers?

There are several such stories. So, initially, we measured our progress between epochs using test prompts of varying complexity, the most indicative of which were cats. The first iterations gave us a lot of bad cats: see the results of “a cat with a red ribbon” below.

In the first picture, the cat has three ears, in the second, it’s an odd shape, and in the third, it’s a little out of focus. We have much better cats now.

More complex concepts included a combination of different requirements, for example, color, style, the position of objects on the picture.

Secondly, we have created the “dallean” language. This includes artifacts we get in the generation when the model tries to generate an image with text. Images from “text in the wild” kind of data resulted in quasi-letters that we sometimes get during generation. They do not yet correlate much with the text of the query, but we hope to fix that with new decoder parameters.

We still have a lot to do to achieve a better and real-like generation of the concepts, though some of them in natural language can be pictured very vaguely. The very idea that you can ask the model to combine different concepts that do not exist in the real world gives us new space for imagination.

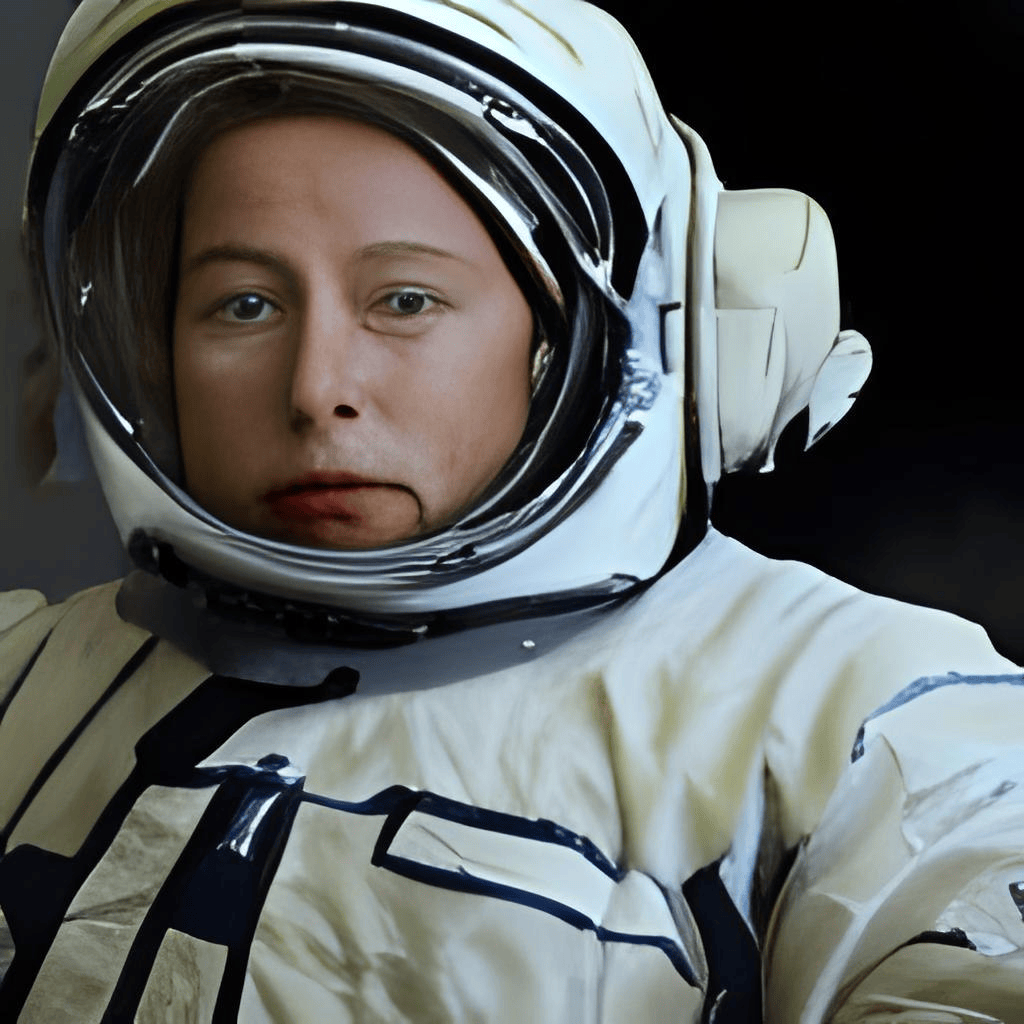

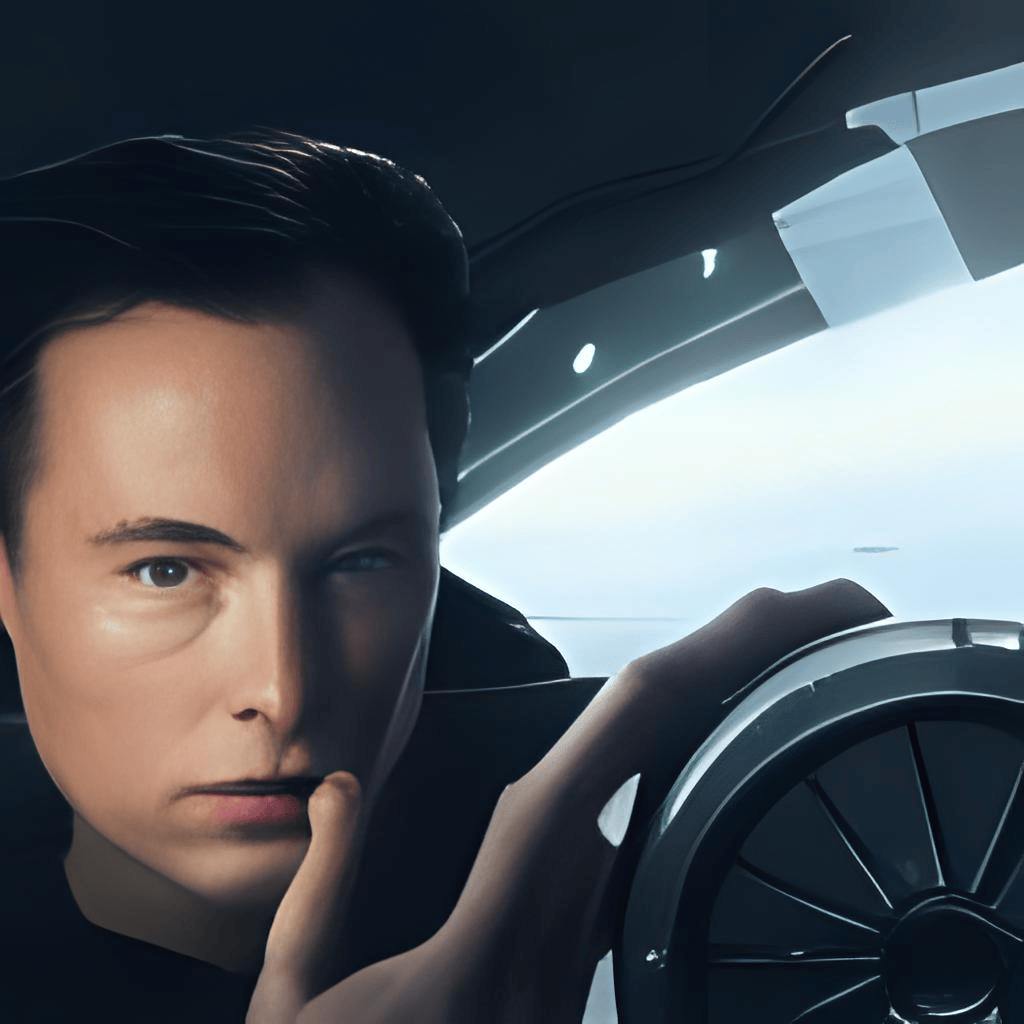

For example, one of our latest tests shows that we can generate quite a realistic combination of famous people in new circumstances and even films that they haven’t starred in. Some neural Elons we have:

How can other companies use the ruDALL-E neural network?

For B2B inquiries we propose ready-to-go cloud-based deployments of the model: see the ML Space service on the Christofari cluster. Model usage is free, companies pay only for the GPU minutes they spend running the models.

We have the same approach for the ruGPT-3 model and other transformers for the Russian language – see ruGPT-3 and family.

How big of a role do ethics of AI play in SberDevices research? Do you see any possible problems for image generation models trained on data from the open internet in this regard?

The model itself cannot be considered a finalized product, rather, it is a piece of technology that can be implemented in new products. In this sense, of course, the model is a bearer of possible prejudices that were encountered in the data.

We are now working to create new benchmarks suitable for models of similar architectures, and the inclusion of ethical tests is standard for us when testing models, both open-source and internal development.

What are the future plans of the SberDevices team?

Multimodal models create the landscape of future research. This definitely includes new benchmarks, new challenging architectures, and new tasks that were considered unsolvable. Keeping in mind the AI effect, we believe that multimodal neural networks will change the public perception of artificial intelligence, pushing the bar even higher. And for us it will be a new challenge.

I would like to thank Sergei for the interview. If you want to try out generating your own art or designs with ruDALL-e, you can check out it’s demo on ruDALL-e’s website.

If you would like to read more interviews like this, don’t forget to follow us on Twitter, Medium, and DEV.

.png)

.jpg)

.jpg)

.jpg)

.jpg)