Unsupervised learning is a type of machine learning that relies less on human guidance and intervention and more or analyzing raw data and extracting patterns from it. It’s thanks to unsupervised machine learning that today we have so many powerful ML applications such as generative AI systems, search engines, and recommendation systems.

In this article, you will learn about how unsupervised learning works and what techniques you can use to build your own ML model.

The definition of unsupervised learning

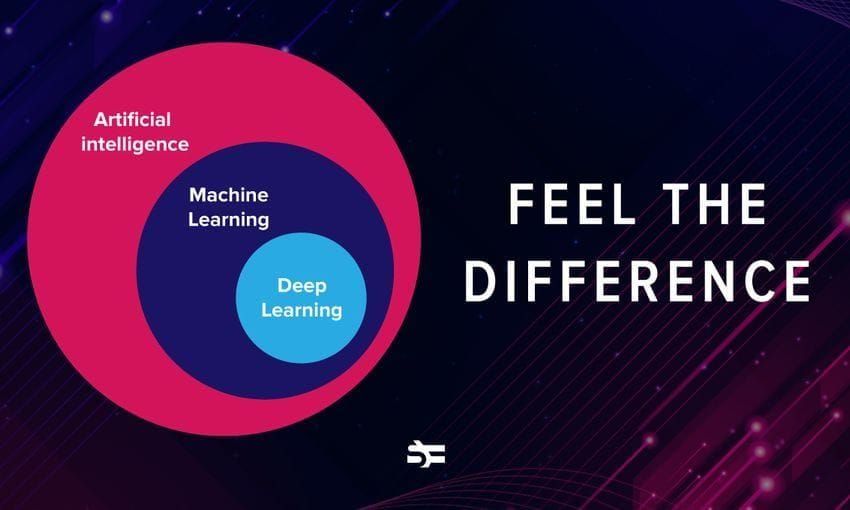

Unsupervised learning is a type of machine learning where the algorithm is presented with unlabeled data and is tasked with finding hidden patterns, relationships, or structures within that data.

The algorithm explores the data without explicit guidance on what to look for or how to interpret it. This makes unsupervised learning particularly valuable when dealing with large and unstructured datasets, or in situations where the ML engineer might not know what they expect to discover in data, as the algorithm can uncover insights that may not be apparent through manual analysis.

How does unsupervised learning work?

An unsupervised learning algorithm requires the same components as other machine learning algorithms. These include data to learn from (lots of data!), as well as a model, which is the mathematical representation of the relationship between input features and output labels. We’ve covered the key components of machine learning in the article dedicated to supervised learning.

An unsupervised learning model requires training, optimization, and deployment, like any other model does.

The training process in unsupervised learning typically involves adjusting the model parameters iteratively until it converges to a solution that captures the underlying structure of the data. Evaluation in unsupervised learning can be more challenging compared to supervised learning since there are no predefined labels. To increase the accuracy and reduce the amount of data that is required to train unsupervised learning models effectively, ML engineers apply combinated approaches. This is called semi-supervised learning.

Unsupervised learning is widely used in various domains, such as data exploration, pattern recognition, and feature learning, and it can be a crucial step in preparing data for further analysis or supervised learning tasks.

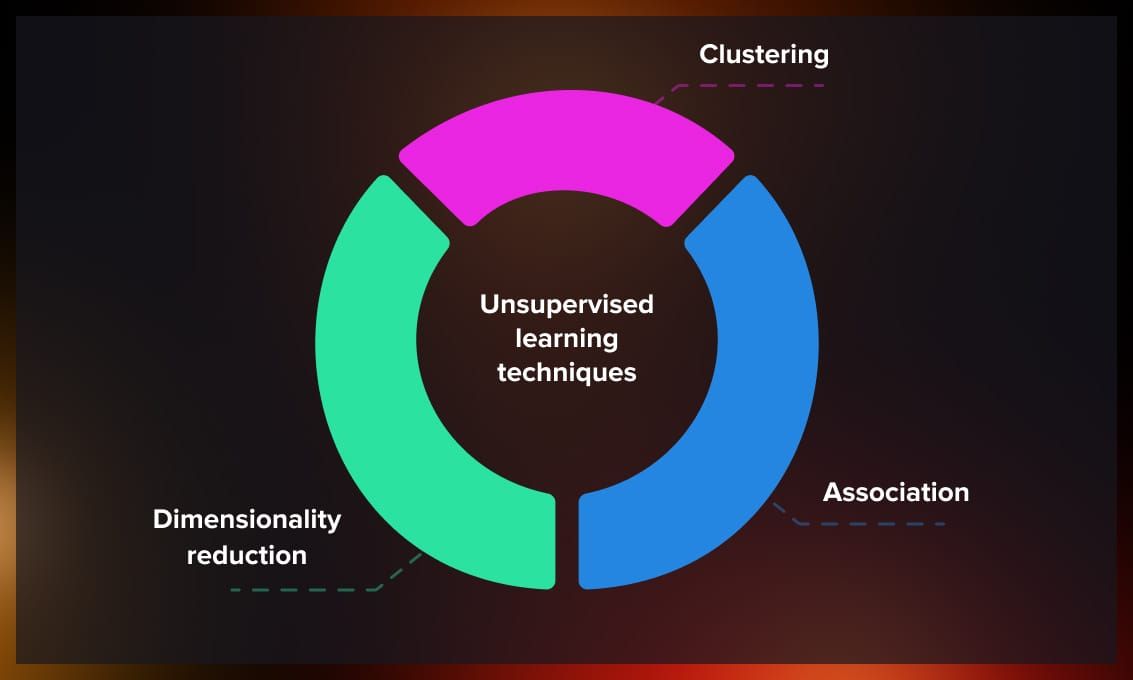

Unsupervised learning techniques

There are several techniques that are widely applied in unsupervised learning depending on the task and data sample size.

Clustering

Clustering is a technique in unsupervised learning that involves grouping similar data points together based on certain characteristics or features. The primary goal is to discover natural groupings or patterns within the data without prior knowledge of the class labels. There are several clustering techniques, each with its own strengths and use cases:

1. K-means clustering

Iteratively assigns data points to the nearest cluster centroid and updates the centroids until convergence. Tries to minimize the sum of squared distances between data points and their assigned cluster centroids.

Applications: Customer segmentation and image compression.

2. Hierarchical clustering

Can be used to build a hierarchy of clusters in a tree-like structure (dendrogram). Starts with each data point as a singleton cluster and iteratively merges or agglomerates the closest clusters until all points belong to a single cluster.

Applications: Taxonomy construction and evolutionary biology.

3. DBSCAN (Density-Based Spatial Clustering of Applications with Noise)

It’s used to identify clusters as dense regions separated by sparser areas in the data space.

The model assigns each data point as a core point, border point, or noise point based on density criteria, and then connects core points to form clusters.

Applications: Anomaly detection in network security and geospatial analysis.

4. Mean shift

Can be used to find dense regions in the data space by shifting centroids toward areas of higher data point density. It iteratively moves centroids to regions of higher data point density until convergence.

Applications: Image segmentation and object tracking in video.

5. Gaussian mixture model (GMM)

The algorithm models the data as a mixture of several Gaussian distributions. It uses the expectation-maximization (EM) algorithm to iteratively estimate parameters such as mean, covariance, and weights of Gaussian components.

Applications: Speech recognition and financial fraud detection.

Choosing the appropriate clustering algorithm depends on the nature of the data and the desired outcomes. It’s often a good practice to try multiple algorithms and evaluate their performance using metrics such as silhouette score or Davies-Bouldin index. Additionally, visualizations like scatter plots or dendrograms can aid in understanding the structure of the clusters.

Association

Association rule learning is a type of unsupervised learning that focuses on discovering patterns, or associations within a dataset. The primary goal is to identify rules that describe the dependencies or associations between different variables or items in the data. This technique is commonly used in market basket analysis, where the objective is to find relationships between products that are frequently purchased together.

The Apriori algorithm is a classic example of an association rule learning algorithm. It follows the “apriori” principle, which states that if an itemset is frequent, then all of its subsets must also be frequent. The algorithm iteratively explores and prunes the search space of itemsets based on support thresholds, gradually building larger and larger sets of frequent items.

Before applying the Apriori algorithm, transaction data is typically encoded into a binary format, where each row represents a transaction, and each column represents an item. A ‘1’ indicates the presence of an item in a transaction, and ‘0’ indicates its absence.

Association rule learning can become computationally expensive with large datasets. Techniques like pruning infrequent itemsets and using more efficient data structures help manage the complexity.

Association rule learning is a valuable tool for extracting meaningful patterns from transactional data. However, it is essential to interpret the discovered rules in the context of the specific application and domain knowledge, as not all associations may be actionable or meaningful.

Dimensionality reduction

Dimensionality reduction is used in unsupervised learning as a method to reduce the number of features or variables in a dataset. The high-dimensional nature of data can lead to challenges such as increased computational complexity, and difficulties in visualization. Dimensionality reduction methods aim to overcome these challenges by transforming the data into a lower-dimensional space, capturing the most relevant information.

Watch this video to learn more about dimensionality reduction.

Now, let’s explore some key techniques related to dimensionality reduction:

1. Principal component analysis (PCA)

Finds a set of orthogonal axes (principal components) along which the data exhibits the maximum variance. PCA transforms the original features into a new set of uncorrelated features, ranked by the amount of variance they capture.

Applications: Facial recognition and genomic data analysis.

2. t-Distributed stochastic neighbor embedding (t-SNE)

Maps high-dimensional data to a lower-dimensional space, preserving pairwise similarities between data points as much as possible. t-SNE minimizes the divergence between probability distributions that represent pairwise similarities in the original and lower-dimensional spaces.

Applications: Visualizing high-dimensional data and drug discovery.

3. Autoencoders

Learn a compressed, lower-dimensional representation of the input data by encoding and decoding it through a neural network architecture. Autoencoders consist of an encoder that maps input data to a lower-dimensional representation (latent space) and a decoder that reconstructs the input from this representation.

Applications: Anomaly detection in time series data and image denoising.

4. Linear discriminant analysis (LDA)

Finds the linear combinations of features that maximize the separation between classes in a supervised context. LDA considers both within-class scatter and between-class scatter to find discriminative axes in a lower-dimensional space.

Applications: Feature extraction for classification tasks, especially when there are known class labels.

5. Isomap (Isometric Mapping)

Preserves the geodesic distances between all pairs of data points in the lower-dimensional space. Constructs a graph representing the neighborhood relationships between data points and then embeds the data into a lower-dimensional space.

Applications: Non-linear dimensionality reduction, particularly useful for capturing the intrinsic geometry of the data.

Choosing the right dimensionality reduction technique depends on the characteristics of the data, the specific goals of the analysis, and the computational resources available. It’s important to note that while dimensionality reduction can be beneficial, it also involves trade-offs, and careful consideration should be given to the impact on interpretability and the task at hand.

Applications of unsupervised learning

Unsupervised learning finds applications in a variety of scenarios where extracting patterns, relationships, or structures from unlabeled data is valuable. Here are some real-life examples:

- Customer segmentation. Businesses often use clustering algorithms like K-Means to segment customers based on their purchasing behavior, demographics, or preferences. For instance, an e-commerce platform may use customer segmentation to tailor marketing strategies for different segments, improving customer engagement and satisfaction.

- Anomaly detection. Unsupervised learning algorithms, such as Isolation Forests or One-Class SVM, are employed for identifying unusual patterns or outliers in data. For example, in cybersecurity, anomaly detection can be used to detect unusual network activity that may indicate a security breach or intrusion.

- Market basket analysis. Association rule learning, like the Apriori algorithm, is utilized to discover relationships between products frequently purchased together. For example, retailers use market basket analysis to optimize product placements, recommend complementary items, and enhance the overall shopping experience.

- Image and video compression. Dimensionality reduction techniques like Principal Component Analysis (PCA) or autoencoders are employed for compressing image and video data. Video streaming services use compression algorithms to reduce the amount of data transmitted over networks without significant loss of quality.

- Topic modeling in text data. Latent Dirichlet Allocation (LDA) and Non-Negative Matrix Factorization (NMF) are used for discovering latent topics in large text datasets. News organizations use topic modeling to categorize articles, making it easier to organize and recommend relevant content to users.

- Genomic data analysis. Unsupervised learning is applied to genomic data for clustering genes or identifying patterns in DNA sequences. For example, researchers use clustering algorithms to identify groups of genes that may be co-expressed or functionally related, aiding in the understanding of biological processes.

- Fraud detection. Anomaly detection algorithms are employed in finance to identify unusual patterns or transactions that may indicate fraudulent activities. Credit card companies use unsupervised learning to detect abnormal spending patterns or transactions that deviate from a customer’s typical behavior.

- Neuroscience and brain signal analysis. Clustering and dimensionality reduction techniques are used to analyze brain signals and identify patterns in neuroscience data. Unsupervised learning helps researchers discover functional brain regions, understand neural connectivity, and analyze patterns in electroencephalogram (EEG) data.

- Recommendation systems. Collaborative filtering and clustering algorithms are used in recommendation systems to suggest products, movies, or content based on user behavior. Streaming platforms use unsupervised learning to recommend movies or TV shows based on the viewing history and preferences of users with similar profiles.

Unsupervised learning can be applied to practically any field where big data is used. In the future, we’re likely to see new interesting applications of such techniques.

Conclusion

By allowing machines to learn without explicit guidance, unsupervised learning opens the door to a wide array of applications, from anomaly detection and clustering to dimensionality reduction and feature learning. It may pave the way for more sophisticated and efficient solutions, where machines not only understand but also interpret the intricacies of the complex data landscapes they navigate.

Read more: